Binary vs. Gradient Thinking in Religion and Science: An Epistemic Analysis

I. Introduction

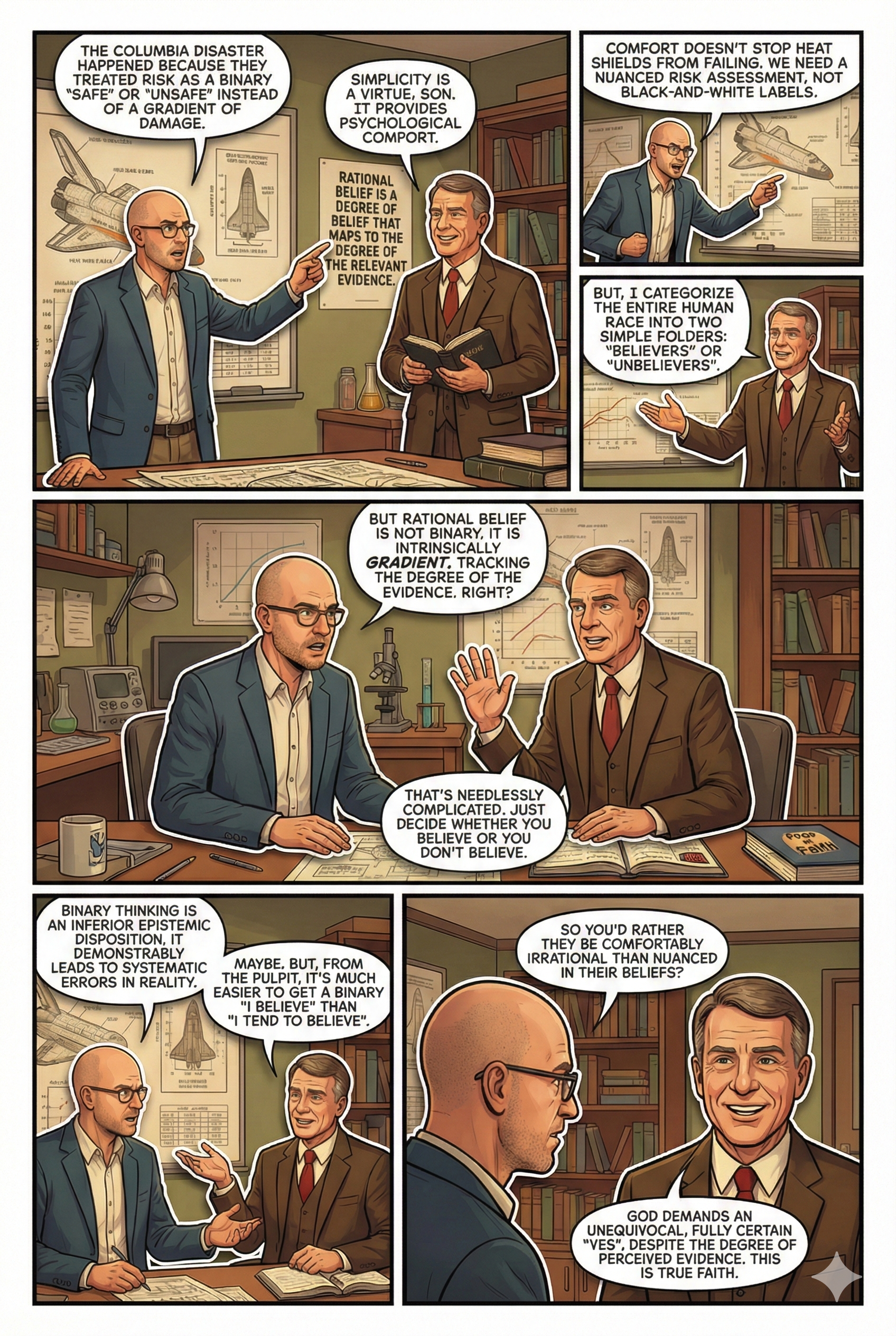

In 2003, the Space Shuttle Columbia disintegrated upon re-entry, killing all seven crew members. Investigations revealed that a simple but catastrophic error contributed to the disaster: foam strikes on the shuttle’s wing were dismissed as either “safe” or “unsafe,” without a nuanced risk assessment. This binary approach to decision-making overlooked the complex realities of structural damage, leading to fatal consequences.

The tragedy highlights a fundamental flaw in human cognition. Our brains are hardwired to favor simplicity, often interpreting the world in black-and-white terms—safe or dangerous, true or false, good or evil. This instinct for binary thinking offers psychological comfort but comes at a cost: it blinds us to complexity, leading to systematic errors in beliefs and decisions. Science, by contrast, thrives on gradient thinking, where evidence is weighed probabilistically, uncertainty is embraced, and conclusions remain provisional. The stakes couldn’t be clearer—our survival and understanding of the world depend on moving beyond simplistic categories toward more nuanced, accurate ways of thinking.

This essay examines how religion and science embody binary and gradient thinking, respectively, and argues that predictive success aligns with gradient thinking. Binary thinking, as an inferior epistemic disposition, leads to systematic errors in beliefs and decisions, limiting our capacity to understand and predict the world accurately.

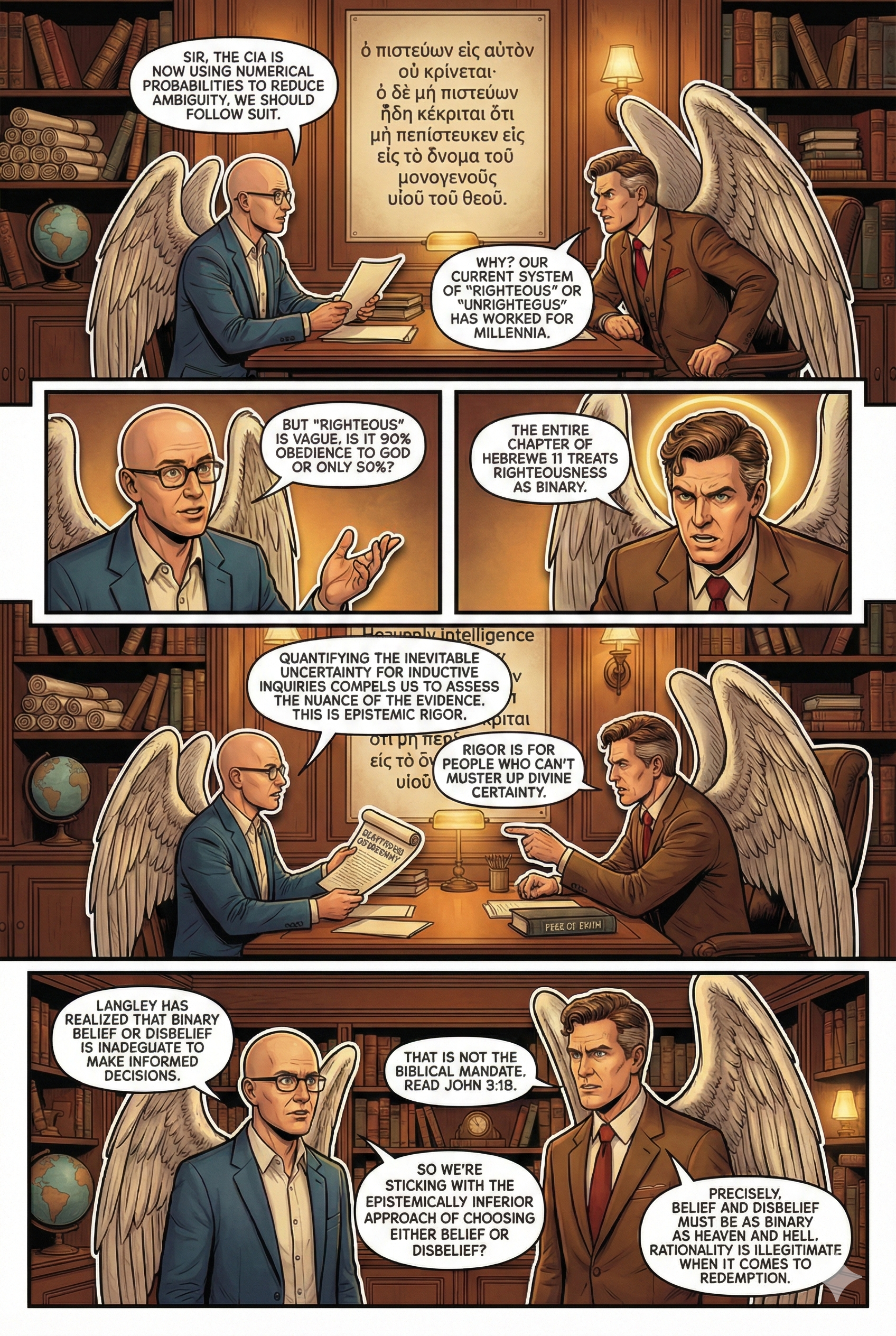

CIA Analysts and their Move Away from Binary Conclusions

In recent years, the Central Intelligence Agency (CIA) has encouraged its analysts to move away from binary conclusions and instead assign explicit probabilities to their assessments. This shift towards probabilistic reasoning aims to enhance the precision and clarity of intelligence reports.

Background

Historically, intelligence assessments often relied on qualitative terms—such as “likely” or “unlikely”—to convey the probability of events. However, these terms can be ambiguous and subject to varying interpretations. To address this, the CIA has promoted the use of numerical probabilities, enabling a more standardized and transparent communication of uncertainty. This approach aligns with practices in other fields, such as climate science and risk assessment, where quantifying uncertainty is crucial for informed decision-making.

Advantages of Probabilistic Assessments

- Enhanced Clarity and Precision: Assigning numerical probabilities reduces ambiguity inherent in qualitative descriptors. Decision-makers can better understand the analyst’s degree of certainty, leading to more informed and nuanced policy decisions.

- Improved Analytical Rigor: Quantifying probabilities compels analysts to critically evaluate the evidence and consider a range of outcomes. This process fosters a more thorough examination of assumptions and potential biases, leading to more robust analyses.

- Facilitates Communication and Consistency: A standardized probabilistic framework ensures that both analysts and consumers of intelligence have a shared understanding of terms and their associated likelihoods. This consistency enhances the credibility and usability of intelligence products.

- Supports Decision-Making Under Uncertainty: Policy decisions often involve weighing risks and benefits under uncertain conditions. Probabilistic assessments provide a nuanced view of potential scenarios, enabling decision-makers to assess the likelihood of various outcomes and plan accordingly.

Implementing probabilistic assessments is not without challenges. It requires training analysts in statistical reasoning and ensuring that both producers and consumers of intelligence are comfortable with probabilistic information. Despite these hurdles, the shift towards probabilistic assessments represents a significant advancement in the pursuit of more accurate and actionable intelligence.
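One practical consequence of stating explicit probabilities is that forecasts can be scored against outcomes. The sketch below uses the Brier score (mean squared error between forecast and outcome), a standard scoring rule that the text above does not mention. The function name and the four-event data set are hypothetical and chosen only to illustrate the mechanics, not to represent any agency's actual practice or results.

```python
def brier_score(forecasts, outcomes):
    """Mean squared difference between forecast probabilities and 0/1 outcomes.

    Lower is better: 0.0 is a perfect record, 1.0 is confidently wrong every time.
    """
    if len(forecasts) != len(outcomes):
        raise ValueError("forecasts and outcomes must have the same length")
    if not forecasts:
        raise ValueError("at least one forecast is required")
    return sum((p - o) ** 2 for p, o in zip(forecasts, outcomes)) / len(forecasts)


# Hypothetical data: four events, 1 = the event happened, 0 = it did not.
outcomes = [1, 0, 1, 1]
graded = [0.8, 0.3, 0.6, 0.9]  # analyst who states explicit probabilities
binary = [1.0, 0.0, 0.0, 1.0]  # analyst who commits to yes/no and is wrong once

graded_score = brier_score(graded, outcomes)
binary_score = brier_score(binary, outcomes)
print(f"graded: {graded_score:.3f}, binary: {binary_score:.3f}")
```

In this made-up record, the single confident miss costs the yes/no analyst more than the graded analyst loses through hedging. A different record could favor the yes/no analyst, so the example shows how scoring works rather than proving which style is better.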

The Rise and Success of Prediction Markets

Prediction Markets: Harnessing Collective Wisdom Through Gradient Thinking

What Are Prediction Markets?

Prediction markets are platforms where individuals can trade contracts based on the outcomes of future events. Participants buy and sell shares in specific outcomes—such as “Will Candidate X win the next election?”—and the price of each share reflects the collective belief about the probability of that event occurring. If the event happens, the share pays out at a set value (usually $1); if not, it becomes worthless.
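The relationship between share price and probability can be sketched in a few lines. This is a simplified illustration that assumes a contract paying a fixed amount if the event occurs and nothing otherwise, and it ignores fees, interest, and trading limits. The function names are hypothetical.

```python
def implied_probability(price, payout=1.0):
    """Probability implied by the price of a contract that pays `payout` if the event occurs."""
    if payout <= 0 or not 0 <= price <= payout:
        raise ValueError("price must lie between 0 and a positive payout")
    return price / payout


def expected_profit(price, own_probability, payout=1.0):
    """Expected profit per share for a buyer who believes the event has `own_probability`."""
    if not 0 <= own_probability <= 1:
        raise ValueError("own_probability must lie between 0 and 1")
    return own_probability * payout - price


market_view = implied_probability(0.70)  # market consensus from a $0.70 share
edge = expected_profit(0.70, 0.80)       # trader who privately believes 80%
print(f"implied probability: {market_view:.2f}, expected profit per share: {edge:.2f}")
```

A trader whose own estimate is above the implied probability expects to profit by buying, and that buying pressure pushes the price up. This is the mechanism by which individual beliefs are folded into the market price.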

Unlike traditional betting markets, which often focus on entertainment, prediction markets serve as real-time aggregators of collective knowledge and expectations. Famous examples include the Iowa Electronic Markets, PredictIt, and corporate platforms like Google’s internal prediction market.

The Utility of Prediction Markets in the Public Domain

Prediction markets offer unique advantages over traditional forecasting methods:

- Aggregated Expertise: Rather than relying on a handful of experts, prediction markets tap into the “wisdom of the crowd.” Participants bring diverse knowledge, whether from deep expertise or timely insights, contributing to more accurate forecasts.

- Dynamic and Self-Correcting: As new information becomes available, market participants adjust their trades, causing prices to shift and reflect updated probabilities. This real-time adjustment mechanism ensures that the market stays current and responsive.

- Incentivized Honesty: Participants have a financial stake in being right. This creates a natural incentive to forecast accurately rather than express personal biases or ideological preferences.

- Applications Across Fields:

  - Politics: Predicting election outcomes, often with higher accuracy than polls.

  - Economics: Gauging future inflation rates or stock market movements.

  - Public Health: Forecasting disease outbreaks or vaccine uptake rates.

  - Corporate Strategy: Companies use internal prediction markets to anticipate product launch success or project timelines.

Intrinsic Dependence on Gradients and Induction

Prediction markets thrive on gradient thinking and are fundamentally inductive in nature:

- Continuous Probabilistic Evaluation: Instead of presenting binary forecasts (“Yes, this will happen” or “No, it won’t”), prediction markets express beliefs along a probability gradient. A share priced at $0.70 for an outcome implies a 70% chance of that event occurring, reflecting the collective, inductive judgment of market participants.

- Inductive Reasoning at Scale: Participants in prediction markets constantly analyze incoming data (news reports, expert analyses, social trends) and adjust their expectations accordingly. Each trade is an inductive act, updating beliefs based on evidence and shifting probabilities to better reflect the likely outcome.

- Error Correction Through Gradients: If new evidence emerges that challenges the market’s current consensus, prices adjust smoothly rather than in an abrupt, binary fashion. For example, in an election market, if a candidate experiences a scandal, their share price may gradually decline as participants weigh the impact, reflecting a probabilistic adjustment rather than an all-or-nothing shift.

Why Prediction Markets Outperform Binary Models

Traditional forecasting often falls into binary traps—declaring outcomes as simply “likely” or “unlikely,” without quantifying degrees of certainty. This approach masks the nuances of uncertainty and ignores the probabilistic nature of real-world events.

Prediction markets, in contrast, inherently respect the gradient structure of uncertainty. They model not whether something will happen, but how likely it is to happen. This makes them not just tools for prediction but also for understanding complex systems where multiple variables interact unpredictably.

The Bigger Picture: Prediction Markets as Epistemic Tools

At their core, prediction markets serve as epistemic instruments, refining collective beliefs through inductive reasoning and gradient assessment. They demonstrate how probabilistic thinking—when applied systematically—can outperform even expert judgment.

In a world often dominated by binary conclusions and overconfident assertions, prediction markets remind us that the truth usually lies on a continuum. By embracing gradient thinking, they offer not only better forecasts but also a deeper appreciation for the complexities of predicting the future.

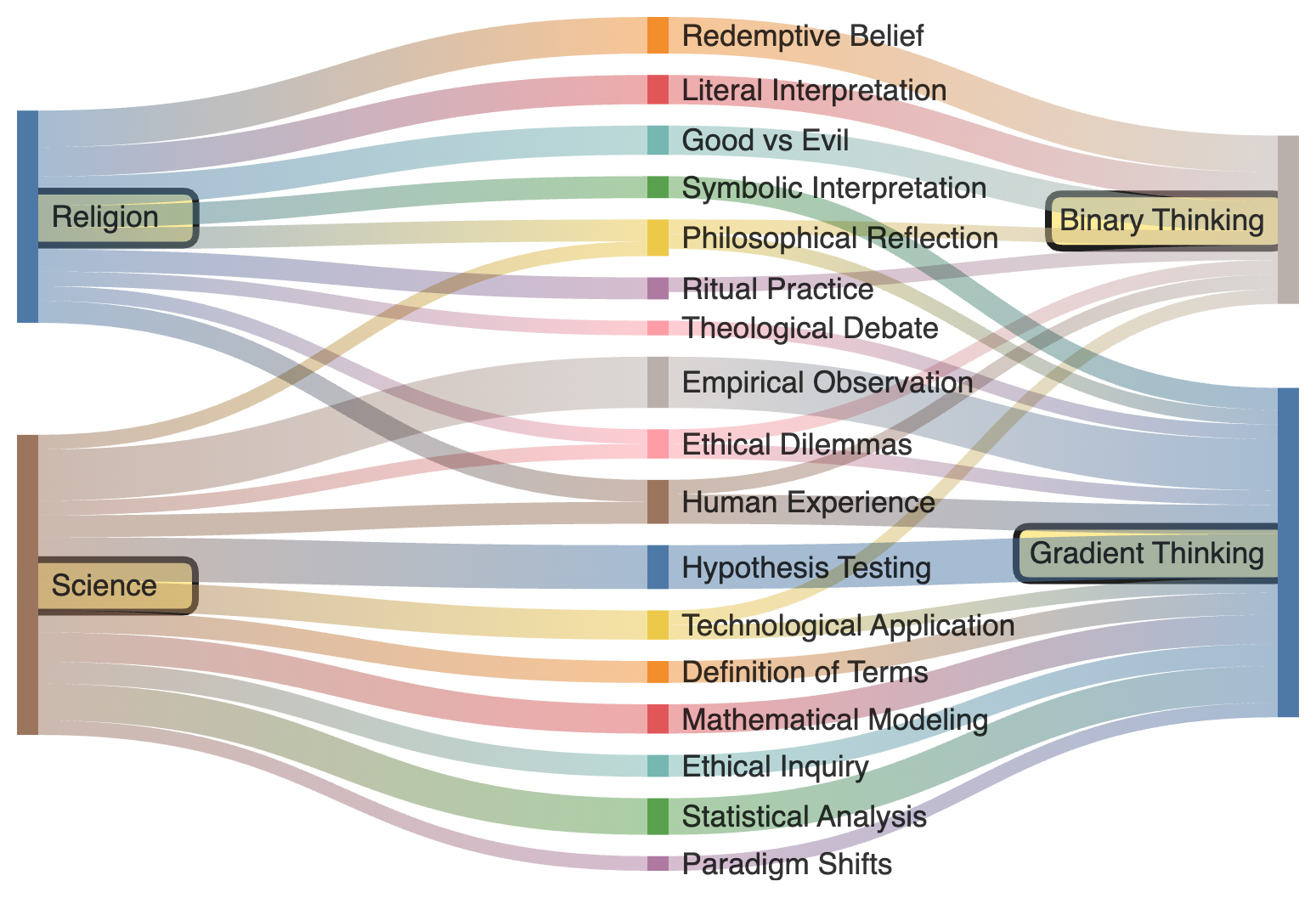

II. Understanding Binary and Gradient Thinking

A. Definition of Binary Thinking

Binary thinking reduces complex concepts into mutually exclusive categories. It divides reality into clear-cut labels: good vs. evil, true vs. false, believer vs. heretic. This approach appeals to human cognitive biases, providing certainty and simplifying decision-making. In religion, binary thinking manifests through redemptive beliefs, literal interpretations of sacred texts, and clear moral dichotomies. The allure lies in its clarity—offering seemingly absolute answers in an ambiguous world.

However, binary thinking is epistemically weak. By forcing nuanced realities into rigid categories, it disregards complexities and gradients, leading to flawed beliefs and poor decisions. It fails to account for the probabilistic and often ambiguous nature of most real-world phenomena.

B. Definition of Gradient Thinking

Gradient thinking embraces complexity and uncertainty. It acknowledges that most phenomena exist on a spectrum rather than within fixed categories. Rather than asking, “Is this true or false?” gradient thinking asks, “To what degree is this supported by evidence?”

Science epitomizes gradient thinking through hypothesis testing, statistical analysis, and probabilistic modeling. Conclusions in science are rarely absolute; they are tentative, open to revision with new evidence. This approach allows for more accurate representations of reality and superior predictive capabilities. It aligns with an epistemic model where beliefs are proportionate to evidence, reducing the likelihood of systematic errors.

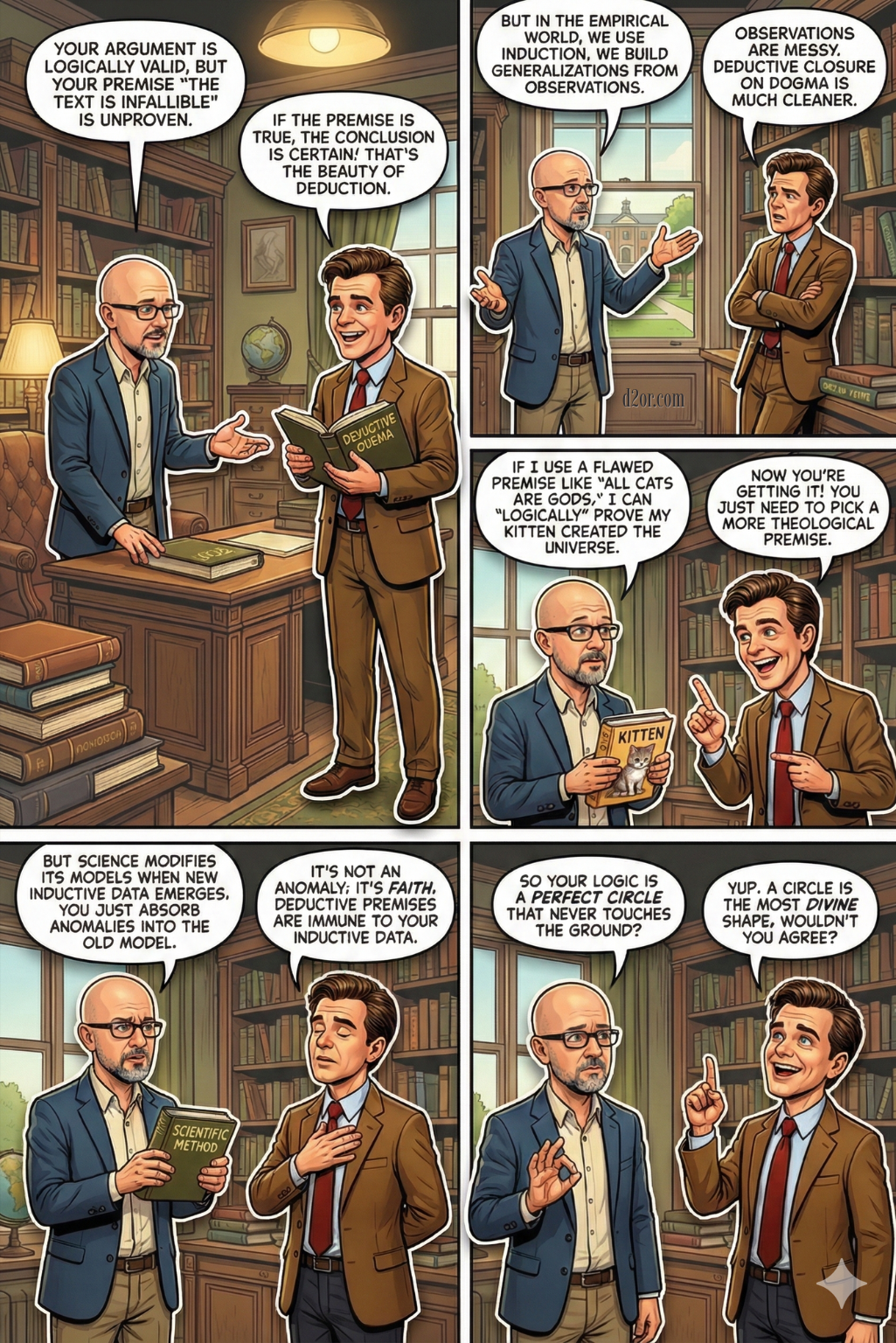

III. Inductive and Deductive Reasoning: The Epistemic Divide

A. Deductive Reasoning and Binary Thought

Deductive reasoning starts with general premises and derives specific conclusions. When the premises are true and the argument is logically valid, the conclusion is necessarily true. For example:

- P1: All humans are mortal.

- P2: Socrates is a human.

- Conclusion: Socrates is mortal.

While deductive reasoning is logically rigorous, it assumes the truth of its premises. In religious contexts, deductive reasoning often relies on dogmatic premises (e.g., “The sacred text is infallible”), leading to binary conclusions. This rigid structure leaves little room for ambiguity, reinforcing binary thinking.

B. Inductive Reasoning and Gradient Thought

Inductive reasoning, by contrast, builds generalizations from specific observations. It operates probabilistically, acknowledging uncertainty. For example, observing that the sun rises daily leads to the conclusion that it will likely rise tomorrow—though not with absolute certainty.

Science is grounded in inductive reasoning. Hypothesis testing, statistical modeling, and empirical observation all rely on gradient thought processes. By continuously updating beliefs based on new evidence, inductive reasoning allows for flexible, accurate understandings.
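The sunrise example can be made concrete with Laplace's rule of succession, a classic formula for this kind of inference. The sketch below assumes a uniform prior over the unknown chance of sunrise, which is a modeling choice and not something the argument above commits to. The function name is hypothetical.

```python
from fractions import Fraction


def rule_of_succession(successes, trials):
    """Laplace's estimate of the probability that the next trial succeeds.

    Assumes a uniform prior over the unknown success rate, which gives
    (successes + 1) / (trials + 2).
    """
    if trials < 0 or not 0 <= successes <= trials:
        raise ValueError("need 0 <= successes <= trials")
    return Fraction(successes + 1, trials + 2)


# The estimate rises with each observed sunrise but never reaches certainty.
for days in (0, 10, 10_000):
    estimate = rule_of_succession(days, days)
    print(days, estimate, float(estimate))
```

With no observations the estimate is 1/2, and after 10,000 consecutive sunrises it is 10001/10002. Confidence grows with evidence while always remaining below 1, which is the graded, revisable kind of conclusion that inductive reasoning produces.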

C. Appropriate Contexts for Deductive and Inductive Reasoning

While inductive reasoning is essential for exploring and understanding the empirical world, deductive reasoning remains indispensable in specific contexts:

- Deductive Reasoning is Appropriate When:

  - The premises are well-established and uncontroversial.

  - Engaging in formal logic, mathematics, or structured philosophical arguments.

  - Deriving conclusions from existing laws or definitions (e.g., in legal reasoning or geometry).

  - Verifying internal consistency within a conceptual framework.

- Inductive Reasoning is Appropriate When:

  - Dealing with empirical observations and data.

  - Investigating natural phenomena where variables are complex and not fully understood.

  - Making probabilistic predictions in uncertain or evolving contexts (e.g., weather forecasting, medical diagnoses).

  - Formulating and testing hypotheses in scientific research.

D. Epistemic Consequences

Deductive reasoning, when applied to unexamined or flawed premises, can produce logically valid yet false conclusions—an epistemic pitfall prevalent in religious dogma. Inductive reasoning, though inherently uncertain, offers a more reliable approach to understanding the world by aligning beliefs with the weight of evidence. Gradient thinking, intrinsic to inductive reasoning, allows for adaptive, error-reducing models of knowledge, making it superior in contexts where complexity and uncertainty dominate.

E. Science as an Intrinsically Inductive Enterprise

While science employs both deductive and inductive reasoning, its foundational strength lies in its intrinsically inductive nature. The empirical method—observation, experimentation, and hypothesis testing—is fundamentally inductive, aiming to build generalized knowledge from specific data points. Science does not claim absolute truths but constructs models and theories that best explain observed phenomena based on accumulated evidence.

Consider the scientific method: researchers observe patterns, propose hypotheses, test them through experimentation, and revise their models based on the results. This process is iterative and probabilistic, with conclusions framed in terms of likelihood rather than certainty. Even well-established scientific theories, like evolution or germ theory, remain open to refinement or revision as new evidence emerges.

Statistical analysis, a core component of scientific inquiry, further underscores this inductive foundation. Scientists rely on probabilities, confidence intervals, and margins of error to interpret data. For example, a clinical trial demonstrating that a new drug reduces symptoms in 85% of patients does not claim universal efficacy but indicates a high probability of effectiveness.
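To show what a confidence interval adds to a headline figure like 85%, the sketch below computes a 95% Wilson score interval for a proportion. The sample size (170 responders out of 200 patients) is a hypothetical assumption, since the example above gives no trial size, and the function name is likewise illustrative.

```python
import math


def wilson_interval(successes, n, z=1.96):
    """Wilson score interval for a binomial proportion (z=1.96 gives about 95%)."""
    if n <= 0 or not 0 <= successes <= n:
        raise ValueError("need n > 0 and 0 <= successes <= n")
    p_hat = successes / n
    denom = 1 + z**2 / n
    center = (p_hat + z**2 / (2 * n)) / denom
    half_width = z * math.sqrt(p_hat * (1 - p_hat) / n + z**2 / (4 * n**2)) / denom
    return center - half_width, center + half_width


# Hypothetical trial: 170 of 200 patients improved (85%).
low, high = wilson_interval(170, 200)
print(f"observed 85%, 95% interval: {low:.3f} to {high:.3f}")
```

Under these assumed numbers the interval runs from roughly 79% to 89%. The same 85% observed in a much smaller trial would produce a wider interval, so the strength of the conclusion scales with the amount of evidence behind it.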

Even deductive elements in science—such as mathematical modeling or logical reasoning—are often built upon inductively derived premises. A climate model may use deductive logic to predict future temperatures, but its foundational assumptions about greenhouse gas effects are grounded in empirical observations.

This intrinsic reliance on inductive reasoning makes science particularly adept at handling complexity and uncertainty. By continuously updating models in response to new data, science embodies gradient thinking, reducing epistemic errors and enhancing predictive success.

The Dangers of Binary Thinking

Binary thinking poses significant risks by simplifying complex realities into rigid categories, leading to flawed beliefs and poor decision-making. Its dangers are particularly evident in how it fosters lazy thinking, promotes inflexible models of reality, and inhibits the ability to listen and learn from others.

- Encouragement of Lazy Thinking: Binary thinking appeals to cognitive shortcuts, allowing individuals to avoid the effort required for deep analysis. By framing issues as either right or wrong or good or evil, it discourages critical thinking and the nuanced evaluation of evidence, leading to oversimplified conclusions.

- Rigid Models of Reality That Fail Under Scrutiny: By imposing strict categories on complex phenomena, binary thinking creates models of reality that lack adaptability. These rigid frameworks often break down when applied to dynamic, real-world situations, leading to epistemic errors and flawed decisions that fail to account for nuance and uncertainty.

- Inhibition of Listening and Learning: Binary frameworks foster an “us vs. them” mentality, making it difficult to engage openly with differing perspectives. When ideas are labeled as entirely right or wrong, it becomes challenging to genuinely listen, consider alternative viewpoints, or integrate new information, ultimately stifling learning and intellectual growth.

In essence, binary thinking not only undermines the accuracy of beliefs but also limits cognitive flexibility and interpersonal understanding, leading to poorer decisions and reduced capacity for personal and collective growth.

Binary Thinking and the Illusion of a God-Like Perspective

Binary thinking mirrors a God-like perspective by implicitly assuming an absolute, all-knowing stance—ignoring the intrinsic human fallibility and the limits of subjective experience. In its quest for definitive answers, binary thinking bypasses the complexities of reality, adopting an unrealistic view that pretends to transcend human limitations.

- Assumption of Absolute Knowledge: Binary thinking operates as if complete, objective knowledge is attainable, categorizing ideas, people, or events as entirely right or wrong, good or evil. This approach mirrors a God-like omniscience, disregarding the fact that human understanding is inherently partial and fallible. In reality, our knowledge is shaped by limited data, biases, and context-dependent interpretations, making absolute judgments both unjustified and misleading.

- Denial of Human Fallibility: By promoting rigid conclusions, binary thinking masks the inherent uncertainty that accompanies all human reasoning. It dismisses the probabilistic and tentative nature of most claims, fostering overconfidence and the illusion of certainty. This creates a dangerous epistemic posture where individuals trust their judgments as infallible, akin to adopting a divine vantage point that humans cannot justifiably claim.

- Obscuring Subjectivity and Context: Human perspectives are deeply shaped by cultural, emotional, and cognitive filters. Binary thinking overlooks this subjectivity, treating individual judgments as universally valid. This erases the nuanced differences in how people experience and interpret reality, promoting the false belief that one’s perspective aligns with an objective, all-encompassing truth, again echoing a God-like stance.

In this way, binary thinking not only simplifies complex realities but also inflates human epistemic authority, fostering the illusion that we can access absolute truths. By ignoring our cognitive limitations and the probabilistic nature of knowledge, it undermines humility, adaptability, and the continuous learning necessary for a more accurate understanding of the world.
