- Common Critiques in the Literature.

- 1) Gettier/“accidentally true” counterexamples (sufficiency failure)

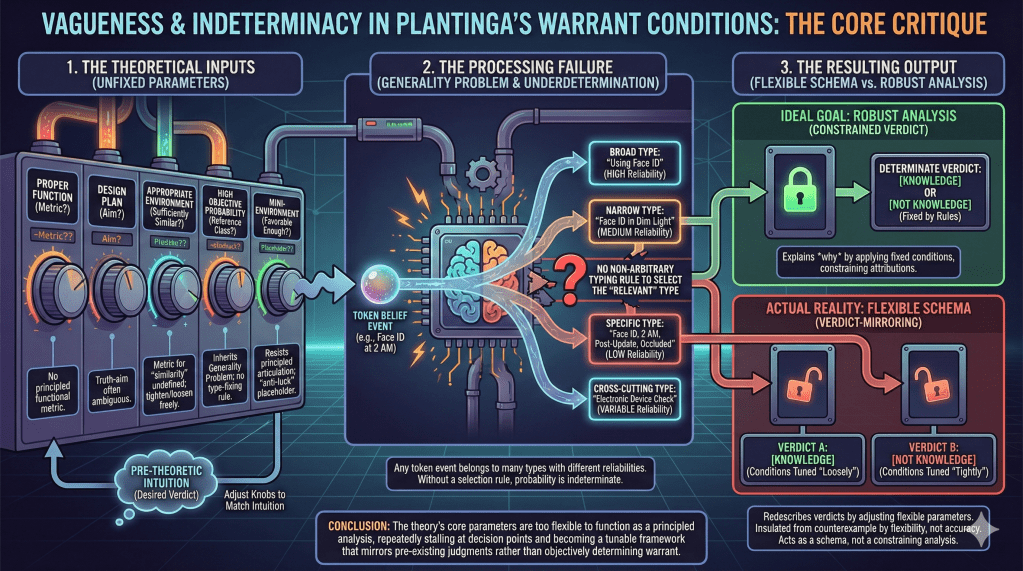

- 2) “Favorable cognitive mini-environments” as an ad hoc tuning knob

- 3) Bootstrapping, “easy knowledge,” and epistemic circularity

- 4) Design plan / proper function: the naturalization dilemma and teleology baggage

- 5) The internalist/evidentialist complaint: Plantinga brackets “reasons” rather than accounting for them

- 6) Etiology and Swampman: does warrant require the right causal history?

- 7) Vagueness and Indeterminacy in the Warrant Conditions

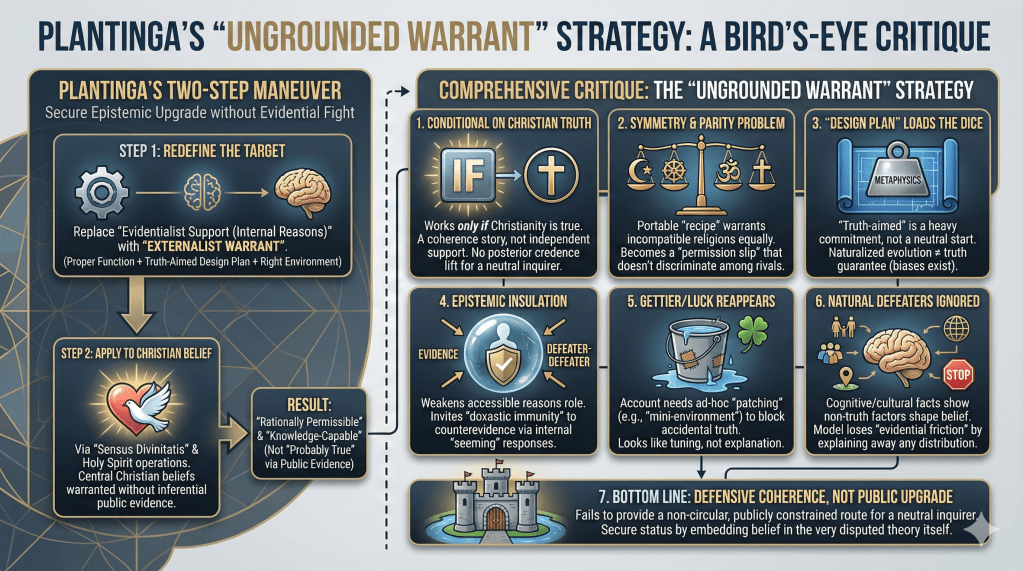

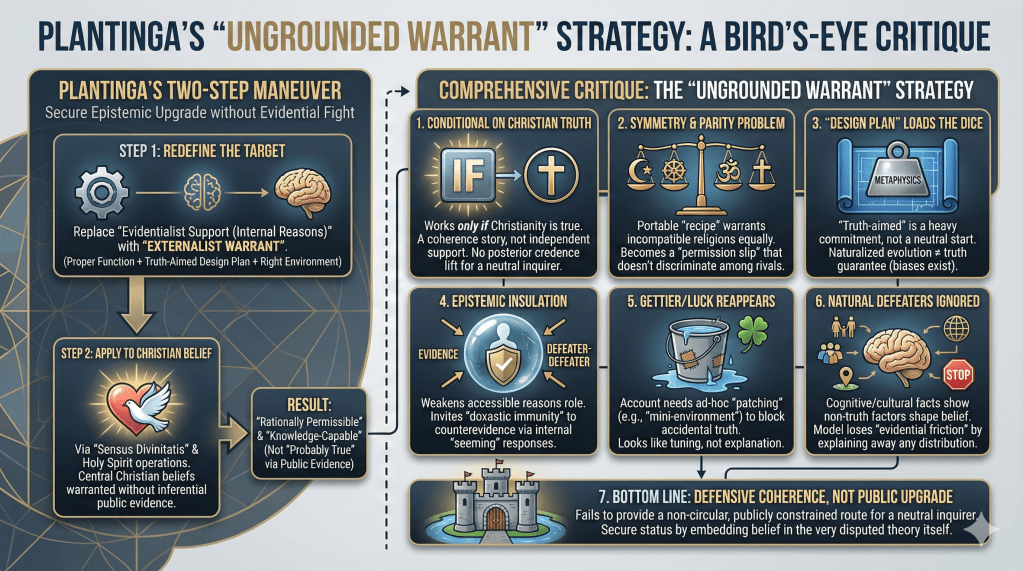

- Bird’s-eye view: what Plantinga is doing

Common Critiques in the Literature.

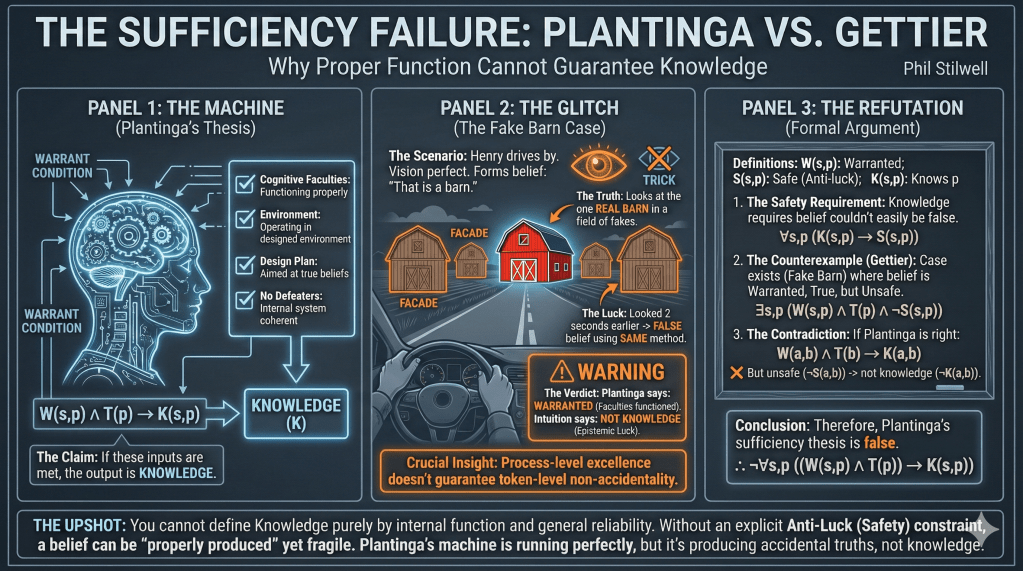

1) Gettier/“accidentally true” counterexamples (sufficiency failure)

Plantinga’s warrant program aims to give sufficient conditions for knowledge: true belief + warrant, where warrant is conferred by properly functioning cognitive faculties operating in the right environment under a truth-aimed design plan. The decisive objection is that these conditions can still permit beliefs that are true only by coincidence—the core Gettier phenomenon. In standard “local deception” cases (e.g., fake-barn style setups), an agent’s faculties can be functioning normally, with no defeaters, yet the belief’s truth is fragile: formed the same way in a nearby situation, it would easily have been false. That modal fragility is precisely what defeats knowledge in Gettier cases, and it can persist even when the belief-forming process is generally reliable. So, unless Plantinga adds an explicit anti-luck constraint (safety-style or equivalent), his conditions remain extensionally too weak: they classify some accidentally true beliefs as knowledge. But once the anti-luck constraint is added, the account’s distinctive proper-function machinery no longer does the decisive work; warrant becomes “proper function + an extra non-accidentality filter,” and the program’s original sufficiency claim is abandoned in its unamended form.

Plantinga’s central aim is to give sufficient conditions for knowledge by replacing (or at least de-centering) internalist “justification” with warrant: a true belief has warrant when it is produced by properly functioning cognitive faculties, operating in an appropriate environment, according to a design plan aimed at truth, with a sufficiently high “objective probability” of truth, and with no undefeated defeaters. (Internet Encyclopedia of Philosophy)

The best-known core objection is that—even if you grant all of this machinery—it still does not rule out the phenomenon that Gettier made unavoidable: a belief can be true, well-produced, and yet true only by coincidence. Put more sharply:

✓ Gettier teaches that what blocks knowledge is not merely “bad reasons,” but epistemic luck: the belief is true, but it could easily have been false given how it was formed. (Stanford Encyclopedia of Philosophy)

✓ Externalist theories are not automatically immune; Gettier-style luck can infect beliefs even when the forming process is generally reliable. (Stanford Encyclopedia of Philosophy)

A canonical way of pressing this against Plantinga is to construct cases where all of his favored positive factors are present—proper function, truth-aimed cognitive design, ordinary operation—yet the belief’s truth is still fragile in the relevant way.

A standard template (Fake Barn / local-deception cases).

An agent looks at what appears to be a barn and forms the belief “That’s a barn.” Their vision is functioning normally; no intoxication; no weird lighting; no defeaters; the belief is formed in the ordinary perceptual way. Unbeknownst to them, the area is filled with barn façades, but by luck they are looking at the single real barn. Intuitively, the belief is true but not knowledge because the agent could very easily have been wrong while forming the belief in exactly the same way (just by looking a few degrees left). This is epistemic luck in a paradigmatic form.

Now notice what the case is designed to show about Plantinga’s sufficiency claim:

- Process-level excellence doesn’t guarantee token-level non-accidentality.

Plantinga’s conditions are fundamentally process- and system-oriented: proper function, truth-aiming design, and (often) a kind of objective probability. But Gettier cases exploit a gap: a belief can be produced by a process that is, in general, functioning as it should, and yet the particular token belief’s truth depends on a coincidence in the local setup. That is exactly what “accidentally true” means in this literature. (PhilArchive)

- “High objective probability” doesn’t automatically kill luck unless it is tied to the right modal profile.

Even if you build “high objective probability” into warrant, you can still have: “Usually this method in this broad environment yields truth,” while this token belief is true only because the agent happened to be looking at the one non-deceptive object. Gettier luck is fundamentally about the nearby-error structure (“easily false”), which is why much post-Gettier work gravitates toward anti-luck constraints like safety or sensitivity. (Stanford Encyclopedia of Philosophy)

- So: Plantinga needs an added anti-luck constraint, or else the account remains extensionally wrong.

And once you add such a constraint, critics argue, the distinctive work is no longer being done by “proper function” itself. Rather, the heavy lifting shifts to a “de-Gettierizing” clause (e.g., favorable mini-environments), which invites the next criticism: the repairs begin to look like parameter-tuning rather than an independently motivated analysis. This is exactly the dialectic Chignell and Crisp develop: proper functionalism, like other externalisms, remains vulnerable to accidentally-true counterexamples; and the subsequent amendments face principled difficulties. (PhilArchive)

The upshot (stated with maximal precision).

The Gettier objection to Plantinga’s warrant program is not “he forgot about Gettier.” It’s that the program’s core ingredients—proper function + right environment + truth-aimed design + no defeaters—do not, by themselves, entail the one feature Gettier cases force any adequate theory to secure: the belief’s truth must not be a matter of epistemic coincidence given the method and circumstances of formation. If Plantinga’s conditions allow even a stable class of beliefs that are true yet “easily false” in nearby situations formed in the same way, then the conditions are not sufficient for knowledge. (Stanford Encyclopedia of Philosophy)
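The gap between process-level reliability and token-level safety can be made concrete with a toy model. The sketch below is only an illustration under stated assumptions: the class `Structure`, the functions `forms_barn_belief`, `process_reliability`, and `is_safe`, and all the numbers are hypothetical and do not come from Plantinga or his critics. "Nearby situations" are crudely modeled as the other structures in the same county.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Structure:
    name: str
    is_real_barn: bool


def forms_barn_belief(structure):
    # Assumption: facades and real barns look identical from the road, so the
    # same perceptual method yields "That's a barn" for every structure.
    return True


def process_reliability(population):
    """Share of true beliefs among all beliefs the method produces."""
    formed = [s for s in population if forms_barn_belief(s)]
    return sum(s.is_real_barn for s in formed) / len(formed)


def is_safe(nearby):
    """True iff the belief is true in every nearby case formed the same way."""
    return all(s.is_real_barn for s in nearby if forms_barn_belief(s))


# Hypothetical numbers, chosen only for illustration.
ordinary_countryside = [Structure(f"barn {i}", True) for i in range(980)]
facade_county = [Structure("the one real barn", True)] + [
    Structure(f"facade {i}", False) for i in range(19)
]

actual = facade_county[0]  # the agent happens to look at the real barn
belief_is_true = forms_barn_belief(actual) and actual.is_real_barn
broad_reliability = process_reliability(ordinary_countryside + facade_county)
locally_safe = is_safe(facade_county)

print(belief_is_true, broad_reliability, locally_safe)
```

In this toy setup the belief is true and the method is highly reliable across the broad environment, yet the token belief fails the safety check in the local one. That is the structural point of the objection, not a claim about how warrant or safety should actually be measured.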

- Phil: Let’s use an ordinary case. You’re driving through a rural area and see what looks like a barn. Unbeknownst to you, the area is packed with barn facades, and there is only one real barn.

- Plantinga: Fine. I look at the structure and form the belief, “That’s a barn.”

- Phil: Your vision is working normally, you are sober, lighting is good, no one is tricking you directly, and you have no defeaters. So your faculties are functioning properly in the normal way.

- Plantinga: Yes, that sounds like proper function.

- Phil: And you happen, by sheer luck, to be looking at the one real barn. So your belief is true.

- Plantinga: Then it is true.

- Phil: Now here is the key question. Do you know it is a barn?

- Plantinga: If my belief is true and produced by properly functioning faculties in the right environment, that looks like knowledge.

- Phil: But in this exact setup, you could very easily have looked two seconds earlier at a facade and formed the same belief in the same way, and you would have been wrong. The belief is true, but true by coincidence.

- Plantinga: It is an unfortunate case, but the belief is still formed normally.

- Phil: That is exactly the problem. Your account is designed to make normal formation plus truth sufficient. Yet this case shows that normal formation plus truth can still be accidental truth. The method does not secure non-accidentality.

- Plantinga: I can add a condition about the local environment being favorable.

- Phil: Then your original sufficiency claim was false. You needed an additional anti-luck filter. And notice what you just did: you added a patch to block the counterexample; the new condition is not a consequence of the original proper-function story.

- Plantinga: But surely knowledge requires that the environment not be misleading.

- Phil: Right, but your base conditions did not state that in a way that rules out this case. In the barn-facade county, everything inside the subject’s head is working properly, and the belief is true, and yet the belief is still the wrong kind of true for knowledge because it is easily false in nearby, ordinary variations of the same situation.

- Plantinga: So you are saying proper function plus truth does not guarantee knowledge.

- Phil: Exactly. The case forces a choice. Either you say the driver knows, which makes knowledge compatible with blatant coincidence, or you deny knowledge, which means proper function plus truth was not sufficient. That is the sufficiency failure in one clean, everyday example.

Argument for critique 1: Gettier-style accidental truth shows Plantinga-style sufficiency fails

Key predicates and intended readings

Annotation: Subject s’s belief that p satisfies Plantinga’s warrant conditions (proper function, truth-aimed design plan, suitable environment, no undefeated defeaters, and so on).

Annotation: Proposition p is true.

Annotation: Subject s knows that p.

Annotation: Subject s’s belief that p is safe, meaning that in nearby situations where s forms the belief that p in the same way, p is not false.

Primary argument (natural deduction style)

Annotation: Plantinga’s sufficiency thesis: true belief plus Plantinga warrant is enough for knowledge.

Annotation: Anti-luck principle: knowledge requires safety.

Annotation: Gettier-style setup exists (for example, fake-barn-style local deception) in which the belief is warranted and true but not safe.

Annotation: Fix one particular subject and proposition from the existence claim.

Annotation: Instance of the safety requirement for this particular case.

Annotation: The belief is unsafe in the Gettier-style case.

Annotation: From the safety requirement and the lack of safety, knowledge is ruled out in this case.

Annotation: Instance of Plantinga’s sufficiency thesis for this particular case.

Annotation: The belief is warranted in Plantinga’s sense and the proposition is true in this case.

Annotation: From Plantinga’s sufficiency instance and the warranted true belief, knowledge follows.

Annotation: Contradiction, since the same case yields both knowledge and not knowledge.

Annotation: Therefore, Plantinga’s sufficiency thesis cannot be maintained together with the safety requirement and the existence of Gettier-style unsafe warranted true beliefs; so, given those widely accepted constraints, the sufficiency thesis fails.
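The formulas that these annotations describe did not survive in the text above, so the following is a hedged reconstruction rather than the original notation. The predicate letters are assumptions introduced here: $W(s,p)$ for "s's belief that p satisfies Plantinga's warrant conditions", $T(p)$ for "p is true", $K(s,p)$ for "s knows that p", and $S(s,p)$ for "s's belief that p is safe". The constants $a$ and $q$ name the subject and proposition fixed from the existence claim. Each line follows the corresponding annotation in order.

```latex
\begin{align*}
1.\;& \forall s\,\forall p\,\bigl[(W(s,p) \land T(p)) \rightarrow K(s,p)\bigr]
      && \text{sufficiency thesis (premise)} \\
2.\;& \forall s\,\forall p\,\bigl[K(s,p) \rightarrow S(s,p)\bigr]
      && \text{anti-luck principle (premise)} \\
3.\;& \exists s\,\exists p\,\bigl[W(s,p) \land T(p) \land \lnot S(s,p)\bigr]
      && \text{Gettier-style case exists (premise)} \\
4.\;& W(a,q) \land T(q) \land \lnot S(a,q)
      && \text{existential instantiation, 3} \\
5.\;& K(a,q) \rightarrow S(a,q)
      && \text{universal instantiation, 2} \\
6.\;& \lnot S(a,q)
      && \text{conjunction elimination, 4} \\
7.\;& \lnot K(a,q)
      && \text{modus tollens, 5, 6} \\
8.\;& (W(a,q) \land T(q)) \rightarrow K(a,q)
      && \text{universal instantiation, 1} \\
9.\;& W(a,q) \land T(q)
      && \text{conjunction elimination, 4} \\
10.\;& K(a,q)
      && \text{modus ponens, 8, 9} \\
11.\;& \bot
      && \text{contradiction, 7, 10} \\
12.\;& \lnot \forall s\,\forall p\,\bigl[(W(s,p) \land T(p)) \rightarrow K(s,p)\bigr]
      && \text{reductio of 1, given 2 and 3}
\end{align*}
```

The derivation is valid as it stands, so the philosophical weight rests entirely on premises 2 and 3: a defender of the sufficiency thesis must either reject the safety requirement or deny that the Gettier-style case really satisfies $W$.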

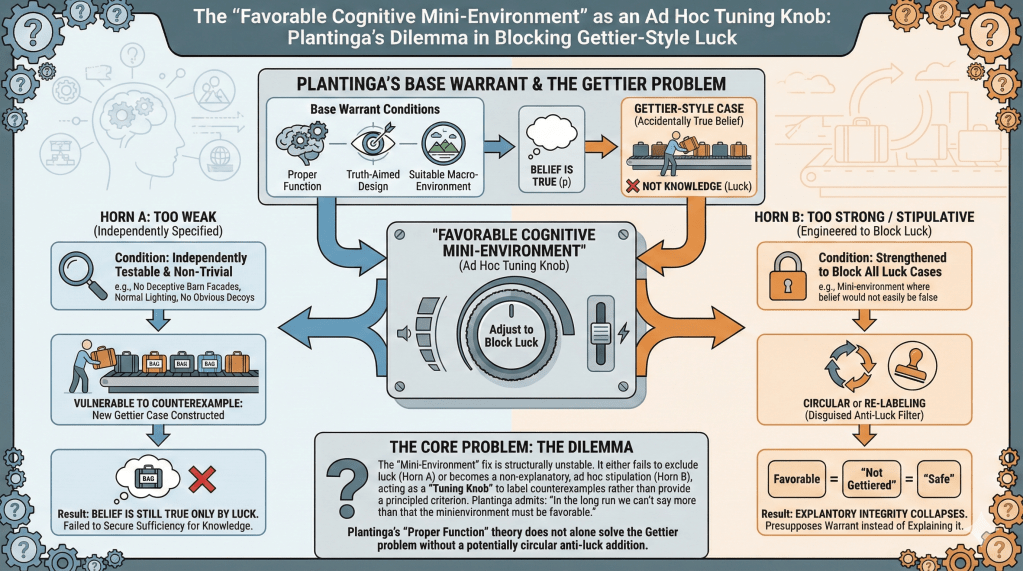

2) “Favorable cognitive mini-environments” as an ad hoc tuning knob

Plantinga introduces the favorable cognitive mini-environment condition to block Gettier-style “accidentally true” beliefs—i.e., cases where proper function in the right general environment still yields truth only by coincidence. But the fix is structurally unstable: either “favorable” is specified in an independently testable way, in which case it remains vulnerable to counterexample (Botham argues Plantinga’s own proposed favorability condition does fall prey to counterexample, and that a natural attempted repair fails too), or it is strengthened until it blocks all the luck cases, in which case it becomes indistinguishable from a stipulative anti-luck filter (“favorable = not Gettiered”) rather than an explanatory condition. Plantinga himself signals the problem when he concedes that “in the long run we can’t say more than that the minienvironment must be favorable,” which amounts to admitting that the key constraint may resist principled articulation. Chignell sharpens the charge by arguing that Plantinga’s amendments treat mini-environments in a way that yields systematic misclassifications—because the concept ends up functioning like a too-flexible parameter rather than a constraint with independent content.

Plantinga introduces the favorable cognitive mini-environment condition to handle exactly the pressure generated by Gettier-style cases of “accidentally true” belief: even if a belief is produced by properly functioning faculties in the right general environment, it can still be true only by coincidence. The mini-environment move is meant to add a local constraint: the immediate cognitive setting must be “favorable” for the relevant exercise of one’s cognitive powers. Botham describes this as Plantinga’s explicit strategy (Plantinga 1996; 2000) for securing warrant sufficient for knowledge. (Springer)

The most-cited objection is not merely “this is vague,” but that the mini-environment condition faces a dilemma that threatens either extensional adequacy or explanatory integrity.

(A) If “favorable” is specified independently and non-trivially, it tends to be too weak.

Suppose Plantinga offers a substantive, independently motivated test for favorability (i.e., a condition you can state without referencing knowledge, warrant, or “not being Gettiered”). Then it becomes vulnerable to counterexample in the usual way: one can construct cases where the mini-environment satisfies the stated favorability condition, yet the resulting belief is still true only by luck. This is precisely the shape of Botham’s critique: Plantinga “specifies a condition required for a cognitive mini-environment’s favorability,” and Botham argues that the specified condition “falls prey to counterexample,” and that a natural attempted repair fails as well. (Springer)

The key point is structural: Gettier-luck can be engineered while keeping lots of “normal” features intact. Any favorability condition that is coarse-grained enough to be independently plausible will typically leave room for local luck—e.g., pockets of deception, atypical nearby error possibilities, “almost the same process in nearly the same setting would have produced a false belief.” If the condition is genuinely informative and not simply “and it’s not Gettiered,” then it will not automatically track every way the world can make a true belief epistemically accidental.

(B) If “favorable” is strengthened to block the counterexamples, it risks becoming circular or merely a re-labeling of anti-luck constraints.

Seeing this, a natural response is to tighten “favorable” until it excludes the luck cases. But here two problems appear:

- Circularity (or disguised circularity). If “favorable” is characterized in effect as “a mini-environment in which this belief, formed this way, would not easily have been false / would not be accidentally true,” then the account stops explaining warrant and begins presupposing the very property it was introduced to illuminate. Chignell’s discussion of Plantinga’s amendments is organized around this dialectic: Plantinga proposes a mini-environment clause to immunize the theory against “accidentally true” cases, and objectors respond that the clause can look satisfied even in the problem cases unless it is strengthened—at which point the worry is that it becomes too close to a “no accident” stipulation. (PhilArchive)

- Collapse into a general anti-luck principle (with “proper function” no longer doing the work). If you make favorability do what it has to do—rule out the relevant nearby-error structure—then you have, in substance, imported a modal anti-luck requirement of the sort developed elsewhere in the post-Gettier literature (e.g., safety-style constraints). At that point, the account’s explanatory center of gravity shifts: warrant sufficient for knowledge is being delivered primarily by the anti-luck condition, while “proper function” becomes largely an upstream reliability story. That may still be a coherent hybrid view, but it undercuts Plantinga’s distinctive promise that proper function in the right environment is the key to warrant. If the decisive work is done by a separate anti-luck filter, critics will reasonably ask why Plantinga’s additional metaphysical machinery is needed rather than a more direct anti-luck approach.

This dilemma is sharpened by a striking admission in Plantinga’s own discussion: he suggests that “in the long run we can’t say more than that the mini-environment must be favorable.” (Christian Classics Ethereal Library) If that is right—if favorability resists further principled specification—then the mini-environment clause starts to look like a placeholder for “whatever blocks Gettier luck here.” But that is exactly what critics mean by calling it a tuning knob: you can protect the theory from counterexample by declaring the mini-environment “unfavorable,” yet without an independently stated criterion, the move doesn’t constrain verdicts in a theoretically informative way.

The core complaint, then, is not that Plantinga is wrong to notice that luck is local. It’s that the “favorable mini-environment” fix threatens to be either:

- Too weak (if independently specified), allowing accidentally true beliefs to count as knowledge; or

- Too strong / too close to stipulation (if engineered to block the counterexamples), either becoming circular (“favorable = non-Gettier”) or collapsing into an imported anti-luck principle that does the real work.

Either horn is costly. On the first horn, Plantinga has not secured sufficiency for knowledge; on the second, he has secured sufficiency only by adding a clause whose content is either not independently characterizable or is best understood as a borrowed anti-luck constraint—leaving “proper function” as, at most, part of a larger package rather than the distinctive solution.


- Phil: Let’s stay with the same rural drive, but now you add your fix: “the cognitive mini-environment has to be favorable.”

- Plantinga: Right. Even if the broad environment is fine, the local setup can be unfavorable in a way that defeats knowledge.

- Phil: Great. Now tell me what “favorable” means in ordinary terms that a normal person can apply without already knowing whether the belief counts as knowledge.

- Plantinga: Roughly, it means the conditions are such that the belief would not easily have been false when formed that way.

- Phil: Notice what you just did. You translated “favorable” into “not easily false,” which is basically the anti-luck condition we introduced to explain why the barn-facade case is not knowledge.

- Plantinga: That seems right. Knowledge should not be easily false.

- Phil: Then your mini-environment clause is not explaining warrant by proper function. It is silently importing an independent anti-luck rule and calling it “favorable.”

- Plantinga: But I can describe favorability more concretely, like “no deceptive barn facades around.”

- Phil: That is the other horn. If you make “favorable” concrete like that, I can generate a new ordinary case that satisfies your concrete rule but still produces accidental truth. I only have to shift the luck source.

- Plantinga: Give me an example.

- Phil: You’re at an airport baggage carousel. You believe “that bag is mine” because it matches your suitcase perfectly. No one is deceiving you, lighting is fine, and there are no obvious “decoy” bags intentionally planted. So your mini-environment condition, stated as “no deception and normal viewing,” is satisfied.

- Plantinga: Okay.

- Phil: But it turns out ten identical suitcases were purchased by attendees of the same conference. By sheer luck, you grabbed the one suitcase that actually is yours. Your belief is true, but it is true by coincidence.

- Plantinga: Then I would say the mini-environment was not favorable after all.

- Phil: Exactly. And that reveals the problem. When you face a counterexample, you can always reclassify the mini-environment as “not favorable.” But unless you have an independent test for favorability, that move is just “label the bad case as unfavorable.” It does not constrain anything.

- Plantinga: So you are saying “favorable” is either too weak or too close to a restatement of the verdict.

- Phil: Yes. If “favorable” is defined in ordinary, independently checkable terms, it will miss some luck cases. If you tighten it until it captures all luck cases, it becomes a disguised anti-luck clause: “favorable means not Gettiered.” Either way, the mini-environment repair functions as a tuning knob, not a principled explanatory condition.

Argument for critique 2: the favorable mini-environment clause is either too weak to block luck or it becomes an ad hoc anti-luck stipulation

Key predicates and intended readings

Annotation: Subject s’s belief that p satisfies Plantinga’s base warrant conditions prior to the mini-environment add-on (proper function, truth-aimed design plan, suitable macro-environment, no undefeated defeaters, and so on).

Annotation: Subject s’s belief that p is formed in a favorable cognitive mini-environment (the amended Plantinga condition intended to block Gettier-style luck).

Annotation: Proposition p is true.

Annotation: Subject s knows that p.

Annotation: Subject s’s belief that p is safe, meaning that in nearby situations where s forms the belief that p in the same way, p is not false.

Annotation: The mini-environment condition has independent content, meaning it is not merely a re-labeling of knowledge or of an anti-luck requirement such as safety.

Primary argument in two lemmas

Lemma A: any independently stated mini-environment condition still permits Gettier-style luck

Annotation: Knowledge requires safety.

Annotation: For any independently specified mini-environment condition, there exists a Gettier-style case satisfying the base warrant conditions and the mini-environment condition and truth, yet lacking safety.

Annotation: Fix an arbitrary candidate mini-environment condition that is independently specified.

Annotation: From the previous universal premise, a Gettier-style counterexample exists for this independently specified condition.

Annotation: Fix one such counterexample instance.

Annotation: Instance of the safety requirement for this case.

Annotation: The belief is unsafe in the counterexample case.

Annotation: From safety being required and safety failing, the belief is not knowledge in the counterexample case.

Lemma B: if the mini-environment clause is strengthened to guarantee knowledge, it becomes an anti-luck stipulation rather than an explanatory constraint

Annotation: Plantinga’s amended sufficiency thesis: base warrant plus favorable mini-environment plus truth is enough for knowledge.

Annotation: From the amended sufficiency thesis and the safety requirement, it follows that any case satisfying the amended antecedent must be safe.

Annotation: Combining Lemma A and Lemma B yields the dilemma: if the amended sufficiency thesis is maintained, the mini-environment condition cannot remain independently specified; it must effectively build in an anti-luck constraint, making it ad hoc in the sense of lacking independent epistemic content.

Conclusion of the critique

Annotation: Therefore, if the mini-environment condition is independently stated, it cannot secure sufficiency for knowledge because Gettier-style unsafe warranted true beliefs remain possible.

Annotation: Conversely, if the mini-environment condition is strengthened so that the amended sufficiency thesis holds, then the condition no longer has independent explanatory force and functions as a built-in anti-luck filter.
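As with the first critique, the formulas behind these annotations are missing from the text, so what follows is a hedged reconstruction with assumed notation. Here $B(s,p)$ stands for the base warrant conditions, $M(s,p)$ for a candidate favorable mini-environment condition, $T(p)$ for truth, $K(s,p)$ for knowledge, $S(s,p)$ for safety, and $I(M)$ for "M has independent content". Treating $M$ as a variable ranging over candidate conditions, and $M_0$ as an arbitrary one, is a choice made for this sketch.

```latex
\begin{align*}
\textbf{Lemma A} \\
\text{A1}.\;& \forall s\,\forall p\,\bigl[K(s,p) \rightarrow S(s,p)\bigr]
      && \text{safety requirement (premise)} \\
\text{A2}.\;& \forall M\,\bigl[I(M) \rightarrow \exists s\,\exists p\,
      (B(s,p) \land M(s,p) \land T(p) \land \lnot S(s,p))\bigr]
      && \text{counterexample premise} \\
\text{A3}.\;& I(M_0)
      && \text{assumption, arbitrary } M_0 \\
\text{A4}.\;& \exists s\,\exists p\,
      (B(s,p) \land M_0(s,p) \land T(p) \land \lnot S(s,p))
      && \text{from A2, A3} \\
\text{A5}.\;& B(a,q) \land M_0(a,q) \land T(q) \land \lnot S(a,q)
      && \text{existential instantiation, A4} \\
\text{A6}.\;& K(a,q) \rightarrow S(a,q)
      && \text{universal instantiation, A1} \\
\text{A7}.\;& \lnot S(a,q)
      && \text{conjunction elimination, A5} \\
\text{A8}.\;& \lnot K(a,q)
      && \text{modus tollens, A6, A7} \\[6pt]
\textbf{Lemma B} \\
\text{B1}.\;& \forall s\,\forall p\,\bigl[(B(s,p) \land M_0(s,p) \land T(p))
      \rightarrow K(s,p)\bigr]
      && \text{amended sufficiency thesis} \\
\text{B2}.\;& \forall s\,\forall p\,\bigl[(B(s,p) \land M_0(s,p) \land T(p))
      \rightarrow S(s,p)\bigr]
      && \text{from B1, A1} \\
\text{B3}.\;& \text{B1} \rightarrow \lnot I(M_0)
      && \text{B2 contradicts A5 under A3} \\[6pt]
\textbf{Conclusion} \\
\text{C1}.\;& I(M_0) \rightarrow \lnot \text{B1}
      && \text{independent content, no sufficiency} \\
\text{C2}.\;& \text{B1} \rightarrow \lnot I(M_0)
      && \text{sufficiency, no independent content}
\end{align*}
```

C1 and C2 are contrapositives of each other, which is why the critique presents them as two horns of a single dilemma. The argument's force depends on premise A2, the claim that every independently specified condition admits a Gettier-style counterexample, and that is the premise a defender of the mini-environment clause would most naturally contest.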

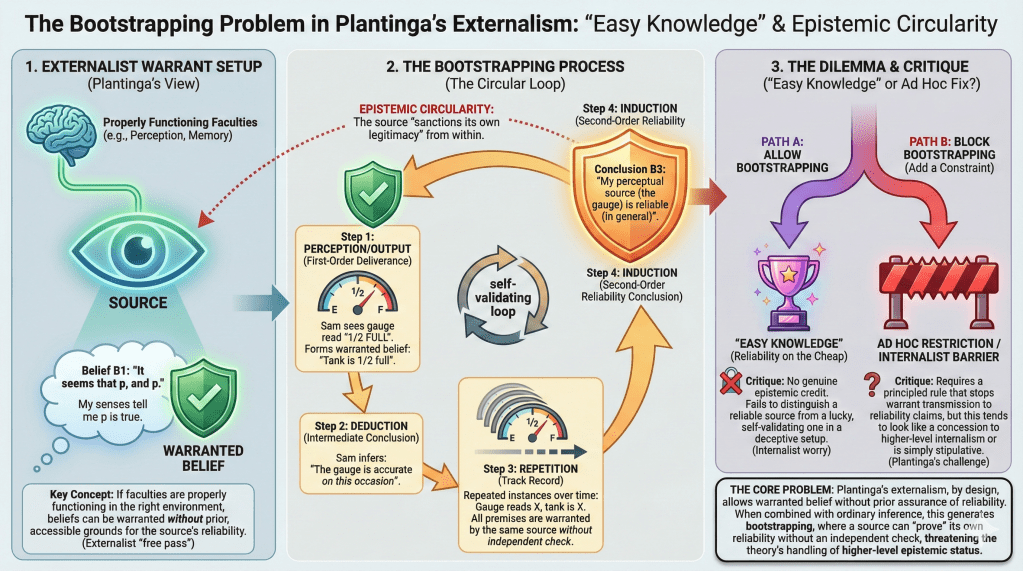

3) Bootstrapping, “easy knowledge,” and epistemic circularity

Plantinga’s warrant program is an externalist view: if your faculties are properly functioning in the right conditions, they can yield warranted beliefs without your first having reflective, independent grounds that the source is reliable. That externalist strength generates the bootstrapping problem: from many first-order deliverances of a source (perception, memory, an instrument), you can infer—via a “track record” argument using outputs of that very source—that the source is reliable, even though the procedure is epistemically circular (it “sanctions its own legitimacy”). The classic gas-gauge case (Vogel) is designed to show exactly this: reliabilist-style theories appear to make higher-level reliability knowledge too easy, because the same self-validating pattern would be available from within even in setups where an independent check is precisely what’s missing. Unless Plantinga adds a principled barrier to warrant transmission in these self-ratifying inferences, his view inherits the same structural defect; but if he does add such a barrier, it tends to look like either a concession to higher-level internalist demands or an ad hoc restriction introduced solely to block the embarrassing result.

The bootstrapping objection targets a distinctive vulnerability of externalist accounts of knowledge: if a theory allows a subject to have warranted beliefs from a source without first having (accessible) reasons to think the source is reliable, then—so the objection goes—it will also tend to allow the subject to acquire knowledge that the source is reliable by using that very source in a self-validating way. The result looks like “knowledge on the cheap,” and many take it to be a serious defect.

This problem is standardly illustrated with Vogel’s and Cohen’s “gas gauge” pattern. Roxanne trusts her gauge despite having no independent reason to think it’s reliable; she repeatedly forms beliefs of the form “the gauge reads X and the tank is X,” then concludes “the gauge is reading accurately on this occasion,” and finally infers by induction that the gauge is reliable in general. The Stanford Encyclopedia frames this explicitly as the “bootstrapping (or ‘easy knowledge’)” problem and states the central verdict: such reasoning “amounts to epistemic circularity” and seems illegitimate—yet reliabilism appears to sanction it. (Stanford Encyclopedia of Philosophy)

Now, why does this matter for Plantinga’s warrant program in core epistemology?

Because Plantinga’s proper-function account is, by his own lights, a way of capturing the “important truth” in reliabilist approaches—namely, that the property that upgrades true belief to knowledge is truth-linked in an external way—while adding proper function and design-plan constraints. The SEP explicitly classifies Plantinga’s account as a reliabilist-style theory of warrant, and it describes his conditions in the familiar externalist shape: proper function in an appropriate environment, design plan aimed at truth, and a high objective probability of truth. (Stanford Encyclopedia of Philosophy)

So the question becomes: Does adding proper function block bootstrapping, or does the bootstrapping structure survive? The objection says it survives, for a principled reason.

The core structure of the objection

Plantinga’s framework (like reliabilism) is naturally read as endorsing something like this:

(PF) A source can deliver warranted beliefs even if the subject lacks antecedent, accessible reasons to think the source is reliable—so long as it is in fact operating properly in the right environment.

That is not a rhetorical gloss; it’s the functional role of an externalist theory: the warrant-conferring features are largely outside the subject’s reflective perspective.

Now combine (PF) with two very ordinary epistemic principles:

- Deductive transmission: if you have warranted belief in the premises and you competently deduce the conclusion, you can acquire warranted belief in the conclusion.

- Inductive generalization: in the right circumstances, a pattern of warranted particular judgments can support a warranted generalization.

Given those, bootstrapping becomes difficult to avoid.

A Plantinga-shaped bootstrapping scenario

Consider a subject, Sam, with normal perceptual faculties.

- Sam looks at an instrument (or just the world) and forms a belief of the form:

(B1) “It seems that p, and p.”

Under Plantinga’s account, if perception is functioning properly in the appropriate environment, Sam’s belief that p can be warranted; and Sam’s belief about the seeming can also be warranted (introspection/appearance). (Stanford Encyclopedia of Philosophy)

- Sam deduces:

(B2) “On this occasion, it is not the case that it falsely seems that p” (or: “my perceptual source delivered accurately this time”).

- After many instances, Sam induces:

(B3) “My perceptual source is reliable (in general).”

The SEP’s bootstrapping diagnosis is that a reliabilist theory will ratify exactly this pattern, and that the result is unacceptable because it “sanctions its own legitimacy (no matter what).” (Stanford Encyclopedia of Philosophy) The IEP presses the same point: the process seems epistemically circular, yet the externalist machinery appears to bless it. (Internet Encyclopedia of Philosophy)

The worry for Plantinga is straightforward: if his conditions are sufficient for warrant at stage (B1), and if he allows ordinary deduction/induction to transmit warrant, then (B3) inherits warrant too easily. Proper function doesn’t change the structural problem, because the circularity is not about malfunction; it’s about the source underwriting its own reliability.

Why critics think this is a genuine defect, not a mere intuition pump

The objection isn’t “circular arguments are always bad.” It is narrower:

✓ The bootstrapping pattern seems to let a subject upgrade from first-order warranted beliefs (about mundane propositions) to a second-order claim that the very source is reliable without any independent check—even though the second-order claim is precisely what we ordinarily treat as requiring something beyond “it keeps telling me it’s right.” (Stanford Encyclopedia of Philosophy)

✓ The method appears insensitive to whether the source is actually reliable in any epistemically creditable way. If a person were in a systematically deceptive setup, the same self-ratifying pattern could be executed, and it would still “feel” internally just as smooth. That is why Vogel and Cohen call it “epistemic circularity,” not merely benign circularity: the procedure would be available in environments where it should not confer rational assurance. (Stanford Encyclopedia of Philosophy)
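The insensitivity point can be illustrated with a small simulation of the gas-gauge pattern. This is a sketch under stated assumptions, not a model of warrant: the function names `self_check_rate` and `independent_check_rate`, the noise model, and the tolerance are all hypothetical. It only contrasts a track record built from the gauge's own readings with one built from an independent measurement.

```python
import random


def self_check_rate(gauge_error, trials, seed=0):
    """Track record when the tank belief is taken from the gauge itself."""
    rng = random.Random(seed)
    passes = 0
    for _ in range(trials):
        true_level = rng.random()
        noise = rng.uniform(-gauge_error, gauge_error)
        reading = min(1.0, max(0.0, true_level + noise))
        believed_level = reading  # "the tank is X" is read off the gauge
        if believed_level == reading:  # "gauge reads X and tank is X"
            passes += 1
    return passes / trials


def independent_check_rate(gauge_error, trials, tolerance=0.05, seed=0):
    """Track record when readings are compared with the actual fuel level."""
    rng = random.Random(seed)
    passes = 0
    for _ in range(trials):
        true_level = rng.random()
        noise = rng.uniform(-gauge_error, gauge_error)
        reading = min(1.0, max(0.0, true_level + noise))
        if abs(reading - true_level) <= tolerance:  # e.g. a dipstick check
            passes += 1
    return passes / trials


for error in (0.0, 0.5):
    print(error, self_check_rate(error, 1000), independent_check_rate(error, 1000))
```

The self-check passes on every trial whether the gauge is accurate or badly off, while the independent check separates the two cases. An externalist can reply that the first-order beliefs are not warranted when the gauge is unreliable. The sketch does not settle that dispute. It only shows why the self-ratifying procedure, considered from the inside, gives the subject no discriminating information.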

This is also why Cohen formulates the issue as pressure toward a higher-level constraint (often called a KR-style principle): roughly, a source can yield knowledge for a subject only if the subject knows that the source is reliable. Accepting such a principle would block bootstrapping, but externalists reject that kind of higher-level requirement precisely because it threatens regress or skepticism. The IEP notes this framing and attributes it to Cohen. (Internet Encyclopedia of Philosophy)

The dilemma for Plantinga-style externalism

Once you see the structure, the critical dilemma is clean:

- Allow bootstrapping: then the account seems to yield “easy knowledge” about the reliability of one’s faculties, which many regard as a reductio of the theory’s handling of higher-level epistemic status. (Stanford Encyclopedia of Philosophy)

- Block bootstrapping by adding a constraint: but then Plantinga must supply a principled rule that stops warrant from “lifting” to reliability claims in these cases without also crippling ordinary inference. This is notoriously hard to do without either:

✓ building in a higher-level internalist requirement (concessive to KR-style pressure), or

✓ adding an exclusion rule that looks stipulative (a “no self-ratification” clause that functions as an external patch).

The SEP’s discussion of bootstrapping emphasizes that the complaint is precisely that the theory “sanctions its own legitimacy,” and that this is a systematic problem for the reliabilist family. (Stanford Encyclopedia of Philosophy) Since Plantinga’s warrant program is explicitly positioned as an improved reliabilist-style account (reliability plus proper function), it inherits the same burden: explain why proper function prevents self-certification, or else accept the cost.

What a rigorous takeaway looks like

A careful statement of the objection (strong, but not over-claimed) is this:

If Plantinga’s proper-function theory allows first-order warrant from perception/memory without antecedent reflective assurance of reliability (as externalism characteristically does), and if warrant transmits through ordinary deduction/induction, then the theory is under pressure from the bootstrapping phenomenon: it appears to permit knowledge (or warranted belief) that one’s faculties are reliable via epistemically circular reasoning. The problem is not merely verbal; it is a challenge to whether the theory can sharply distinguish (i) genuine epistemic credit from (ii) procedures that would “validate” a source from within regardless of whether the subject has any independent purchase on the source’s trustworthiness. (Stanford Encyclopedia of Philosophy)

- Phil: Let’s use an everyday object: your car’s fuel gauge. You glance at it and believe “I have half a tank.”

- Plantinga: Fine. If your perceptual faculties are working properly, that belief can be warranted.

- Phil: You also form the belief “the gauge reads half.” That is just vision again.

- Plantinga: Yes.

- Phil: Now you drive a bit, stop, and the gauge still reads half. You repeat this a few times over a week. Each time you form two beliefs: “the gauge reads X” and “I have X fuel.”

- Plantinga: Right.

- Phil: Then you conclude: “My gauge is reliable.”

- Plantinga: That seems like a reasonable induction from repeated success.

- Phil: Here is the problem: every single “success” premise you used was delivered by the same gauge you are now declaring reliable. You never checked fuel level independently. No dipstick reading, no actual measurement, no odometer-based verification, nothing.

- Plantinga: But if the gauge is in fact reliable, then those beliefs are true and produced by proper function, so they are warranted.

- Phil: Exactly. Your view makes the argument self-certifying. If the gauge is reliable, it gives you warranted premises, which let you infer that it is reliable. That means the gauge can “prove itself” from within.

- Plantinga: If it is reliable, why is that a problem?

- Phil: Because the epistemic issue is not “is the gauge reliable in fact?” It is “does this method give you warrant for the claim that it is reliable?” Your method says yes even when the subject has done nothing that distinguishes a reliable gauge from an unreliable one that merely happens to read correctly in those instances.

- Plantinga: But the unreliable gauge would not keep giving true readings.

- Phil: It might, by luck or by a stable but misleading correlation in the short run. More importantly, from the driver’s perspective, the reasoning pattern is identical in both scenarios. The driver is using the gauge to validate the gauge. That is epistemic circularity.

- Plantinga: So you want an extra constraint: you cannot gain warrant for source reliability using only that source.

- Phil: Yes. But once you add that constraint, your externalist story is no longer doing the work. You are imposing a prohibition on a very ordinary kind of warrant transmission: you can get first-order warranted beliefs from a source, but you cannot use those beliefs to bootstrap to “the source is reliable.”

- Plantinga: Why can’t I accept that bootstrapping is legitimate?

- Phil: Because then “reliability knowledge” becomes too cheap. Any source that happens to be working can certify itself without independent checks. That collapses the difference between having a truth-conducive source and having a rationally grounded assurance that the source is truth-conducive. Your framework either permits easy self-validation or needs an ad hoc barrier to block it. That is the bootstrapping problem in a single dashboard example.

Argument for critique 3: bootstrapping yields “easy warrant” for reliability claims under externalism, conflicting with a plausible anti-circularity constraint

Key predicates and intended readings

(1) Annotation: The subject’s belief in a given proposition has Plantinga-style warrant.

(2) Annotation: The proposition is true.

(3) Annotation: The source is reliable in the relevant range, meaning its outputs are truth-conducive in normal conditions.

(4) Annotation: On a given trial, the source outputs the proposition.

(5) Annotation: On a given trial, the source is accurate, meaning the proposition it outputs on that trial is true.

(6) Annotation: The subject’s belief in the proposition is formed via the source, with no independent truth-check of the proposition that is not mediated by the source.

(7) Annotation: The subject’s warrant for the proposition essentially depends on the source, meaning the source is an indispensable component of the evidential route to the proposition.

(8) Annotation: For each trial in the run, the subject has a warranted belief that the source is accurate on that trial (a batch of warranted accuracy claims).

(9) Annotation: The subject’s warrant for the reliability claim is obtained by the bootstrapping pattern, meaning it is inferred from a batch of accuracy claims whose warrant essentially depends on the source.

Core premises

(10) Annotation: Externalist warrant principle: if the proposition is true and the belief in it is formed via the properly functioning source in normal conditions, then the proposition is warranted for the subject even without prior warrant for the source’s reliability.

(11) Annotation: If the subject’s belief in the proposition is formed via the source in the relevant way, then the source is an essential dependence in the subject’s warrant for the proposition.

(12) Annotation: Accuracy entailment: if the source outputs a proposition on a trial and that proposition is true, then the source is accurate on that trial.

(13) Annotation: Conjunction closure for warrant.

(14) Annotation: Deductive transmission of warrant.

(15) Annotation: Essential-dependence transmission: if an inference from a premise to a conclusion is warranted, and the warrant for the premise essentially depends on the source, then the warrant for the conclusion essentially depends on the source as well.

(16) Annotation: Inductive generalization schema: sufficiently many warranted accuracy instances support warranted belief in the source’s reliability.

(17) Annotation: If the subject has a batch of warranted accuracy claims and each depends essentially on the source, then the reliability conclusion is obtained by bootstrapping.

(18) Annotation: Anti-circularity constraint: bootstrapping does not confer warrant for the reliability claim.

Existence setup for the bootstrapping pattern

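(19) Annotation: There is a subject, a source, and a finite run of trials such that the subject initially lacks warrant for the source’s reliability, yet on each trial the source outputs some true proposition, the subject believes that proposition via the source, and the subject also has warrant that the source output that proposition on that trial.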

Derivation

(20) Annotation: Fix a particular subject, source, and run-length satisfying the existence claim.

(21) Annotation: Unpack the trial structure for the fixed witnesses.

(22) Annotation: Work within one representative trial; the argument generalizes to all trials in the run.

(23) Annotation: Instance of (10) for the chosen witnesses.

(24) Annotation: From (22) and (23), the output proposition is warranted for the subject even though the subject lacks prior warrant for the source’s reliability.

(25) Annotation: From (22) and (24), both the meta-output claim and the output proposition are warranted.

(26) Annotation: From (13) and (25), the conjunction is warranted.

(27) Annotation: Instance of (12); together with the truth of the output proposition, it links the output to accuracy.

(28) Annotation: Bridge step: treating the output as the proposition asserted, the proposition itself suffices for its truth in the object-language schematic; this is the standard simplification used in bootstrapping formalizations.

(29) Annotation: The entailment in (28) is warrant-preserving as a logical truth of the schema.

(30) Annotation: From (14), (26), and (29), the accuracy claim for the trial is warranted.

(31) Annotation: Instance of (11) for the chosen witnesses.

(32) Annotation: From (22) and (31), the warrant for the output proposition essentially depends on the source.

(33) Annotation: Since the warrant for the output proposition essentially depends on the source, any warranted conjunction containing that proposition also essentially depends on the source; this is an instance of (15) applied to a tautological entailment.

(34) Annotation: From (15), (33), and the warranted entailment in (29), the accuracy claim for the trial essentially depends on the source.

(35) Annotation: Generalize the trial-level reasoning in (30) and (34) across the whole run.

(36) Annotation: By definition of the batch condition and (35), the subject has a batch of warranted accuracy claims for the source.

(37) Annotation: From (16) and (36), induction yields warranted belief in the source’s reliability.

(38) Annotation: From (36) and (35), with the fixed witnesses, the bootstrapping antecedent holds.

(39) Annotation: From (17) and (38), the reliability conclusion is obtained by bootstrapping.

(40) Annotation: From (18) and (39), anti-circularity rules out warrant for the reliability claim.

(41) Annotation: Contradiction, since (37) gives warrant for the reliability claim while (40) denies it.

Conclusion

Annotation: The package consisting of externalist warrant for source outputs, standard transmission principles, inductive generalization to reliability, the anti-circularity constraint, and the existence of a normal run of true outputs cannot all be true together. Therefore, unless Plantinga-style externalism rejects or restricts some transmission or inductive step, it faces the bootstrapping problem: it predicts that bootstrapping can yield warranted reliability beliefs, which conflicts with the anti-circularity constraint.
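The final steps of this derivation can be checked mechanically. The Lean 4 sketch below is only a propositional skeleton, under the assumption that the trial-level work has already delivered a batch of warranted accuracy claims that each depend on the source. The names `Batch`, `Dep`, `Boot`, and `WRel` are illustrative labels introduced here, not the notation of the original argument; the three hypotheses correspond to the premises the derivation cites as (16), (17), and (18).

```lean
-- Illustrative labels (assumptions of this sketch, not the source's symbols):
--   Batch : the subject has a batch of warranted accuracy claims
--   Dep   : each of those claims essentially depends on the source
--   Boot  : the reliability conclusion is obtained by bootstrapping
--   WRel  : the subject has warrant for "the source is reliable"
theorem bootstrapping_inconsistent
    (Batch Dep Boot WRel : Prop)
    (hInd  : Batch → WRel)        -- inductive generalization, cited as (16)
    (hBoot : Batch ∧ Dep → Boot)  -- bootstrapping condition, cited as (17)
    (hAnti : Boot → ¬ WRel)       -- anti-circularity constraint, cited as (18)
    (hBatch : Batch) (hDep : Dep) : False :=
  -- Anti-circularity denies warrant for reliability; induction supplies it.
  hAnti (hBoot ⟨hBatch, hDep⟩) (hInd hBatch)
```

Read this way, the contradiction needs only those three premises plus the batch itself, which suggests that a defender has to reject or restrict one of them, or else block the earlier steps that generate the batch.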

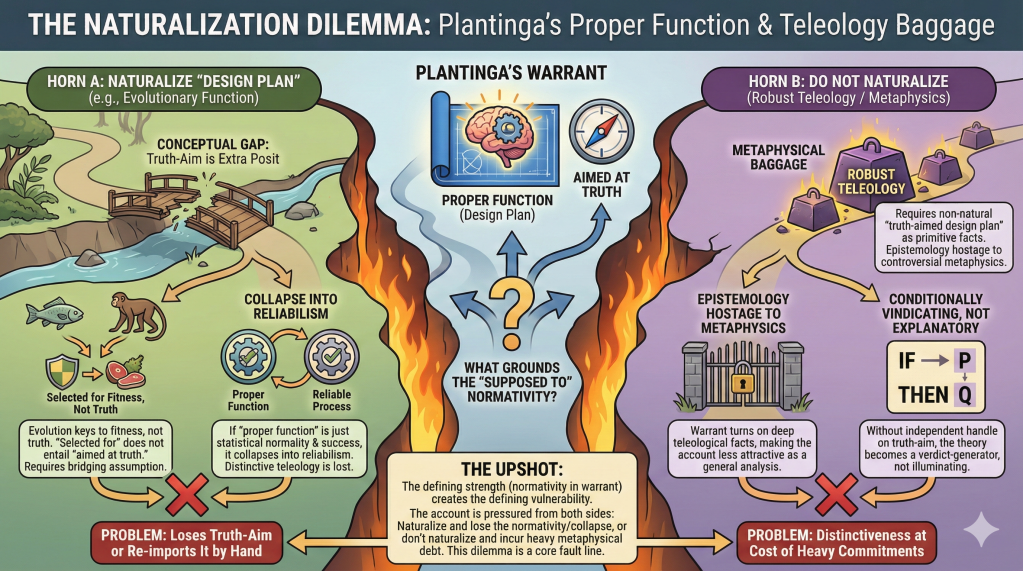

4) Design plan / proper function: the naturalization dilemma and teleology baggage

Plantinga builds warrant around proper function: a belief is warranted only if produced by faculties functioning as they are supposed to function under a design plan aimed at truth, in the right sort of environment, with a high objective probability of truth. The core objection is a dilemma about what grounds that “supposed to” normativity. If the “design plan” is naturalized (e.g., via evolutionary function), then either (a) the view risks collapsing into a more ornate form of reliabilism (the distinctive work is again done by reliability), or (b) it fails to secure Plantinga’s crucial requirement that the plan is aimed at truth rather than merely fitness or successful behavior. If, instead, “design plan aimed at truth” is not naturalized, then the account’s analysis of knowledge depends on robust teleological/metaphysical commitments (the sort of background story that many epistemologists treat as optional), making warrant—and thus knowledge—hostage to controversial assumptions about purposiveness and truth-aim.

Plantinga’s proper-function account makes warrant depend on more than reliability. At a first approximation, a belief is warranted only if it is produced by cognitive faculties that are functioning properly in an appropriate environment, where “proper function” implies a design plan, and the relevant segment of that design plan is aimed at truth. (Stanford Encyclopedia of Philosophy) This is not an incidental flourish; it is the structural core of the view.

The most-cited core-epistemology pressure point is that this machinery generates a dilemma about what, exactly, grounds the normative notions doing the work—“proper,” “design plan,” “aimed at truth,” “good design”—and whether the theory can keep its distinctive explanatory advantages without importing controversial metaphysics.

The dilemma is simple to state: either “design plan / proper function” is naturalized, or it isn’t. Either way, critics argue, a serious cost follows.

Horn A: Naturalize “design plan” (e.g., in broadly evolutionary/etiological terms).

Plantinga explicitly allows that “design plan” need not involve a conscious designer; a design plan can be modeled in functional terms, and one might hope evolution can supply it. (Internet Encyclopedia of Philosophy) But once you go this route, two core worries arise.

✓ Truth-aimedness becomes an extra posit, not something delivered by naturalized function. A standard line of criticism is that evolutionary function is fundamentally keyed to fitness, not truth. That creates a conceptual gap: even if evolution can explain why we have stable cognitive dispositions, it does not by itself yield the normative claim Plantinga needs—namely, that the relevant cognitive modules are supposed to produce true beliefs as their function (rather than, say, fitness-enhancing representations that can diverge from truth in systematic ways). In other words, “selected for” does not straightforwardly entail “aimed at truth,” yet Plantinga’s warrant condition requires precisely that aim. (Stanford Encyclopedia of Philosophy)

✓ Once naturalized, proper function threatens to collapse into (or closely approximate) reliabilism. If “proper function” is cashed out as “operating in the statistically normal way for this evolved system in its normal range,” and “good design” is cashed out by success rates, then the added “design plan” layer can look like a complicated route back to a reliability story—precisely the family of theories Plantinga is often said to refine rather than replace. (This is a common framing in overviews: proper functionalism is treated as a reliabilist-descended theory with additional constraints.) (Stanford Encyclopedia of Philosophy)

If this collapse happens, the distinctive metaphysical vocabulary has purchased little explanatory gain, while leaving you with the standard externalist burdens (e.g., easy-knowledge/bootstrapping pressures).

So on Horn A, the critic’s verdict is: you either don’t get the truth-aimed normativity you need, or you re-import it by hand—at which point you’ve slid toward Horn B.

Horn B: Do not naturalize; treat “design plan aimed at truth” as primitive (or as grounded in robust teleology).

If “aimed at truth” is not secured by a naturalized account of function, the theory’s warrant conditions rest on a thick normative base: there is a fact of the matter about what our faculties are for, and that purpose is truth-directed in the relevant way. (Stanford Encyclopedia of Philosophy) The criticism here is not that teleology is incoherent; it’s that the epistemology now depends on contentious metaphysical commitments that many philosophers regard as optional at best, and question-begging at worst.

✓ Epistemology starts to look hostage to metaphysics. If warrant requires a truth-aimed design plan, then whether ordinary humans have warrant turns on deep facts about the kind of teleology in play. That makes the account less attractive as a general analysis of knowledge for a broad audience, because it ties a core epistemic notion to a controversial background story about normativity and function.

✓ The account risks becoming “conditionally vindicating” rather than explanatory. If the theory can always say “when the relevant design plan is truth-aimed and functioning properly, the belief is warranted,” critics press: what non-question-begging resources does the theory give us for determining when those conditions obtain? Without an independent handle on “truth-aimed design plan,” the view risks functioning like a verdict-generator rather than an illuminating explanation.

The upshot (as a core epistemology objection):

The proper-function program’s defining strength—building normativity into warrant rather than treating warrant as mere reliability—also generates its defining vulnerability. Unless “design plan” and “aimed at truth” are (i) specified in a way that is independent of “this is knowledge/warrant,” and (ii) grounded in a way that does not either collapse into ordinary reliabilism or import heavyweight teleology, then the account is pressured from both sides:

✓ naturalize it → you lose (or must re-add) the truth-aimed normativity, and you risk collapsing back into reliabilism (Stanford Encyclopedia of Philosophy)

✓ don’t naturalize it → you retain distinctiveness, but at the cost of metaphysical commitments many epistemologists won’t grant as part of an analysis of knowledge (Stanford Encyclopedia of Philosophy)

That dilemma—more than any single counterexample—is why “design plan / proper function” remains one of the most-cited fault lines in evaluations of Plantinga’s warrant program in core epistemology.

- Phil: Let’s move from barns and gauges to a very ordinary concept: a smoke detector. It beeps when there is smoke.

- Plantinga: Fine. It has a function and a design plan.

- Phil: Now tell me what makes it correct to say the detector is supposed to detect smoke.

- Plantinga: Because that is what it was designed for.

- Phil: Good. Now translate that to humans. You say our cognitive faculties are supposed to aim at truth because they operate under a design plan aimed at truth.

- Plantinga: Yes. Proper function is defined relative to that plan.

- Phil: Here is the fork. Either that “design plan aimed at truth” is explained in purely natural terms, or it isn’t. Which is it?

- Plantinga: It can be naturalized. Evolution could supply a design plan in the relevant sense.

- Phil: If you naturalize it by evolution, then “what the system is for” is fixed by selection pressures. But selection pressures aim at survival and reproduction, not truth. A system can be excellent for survival while being systematically biased about truth. So where does truth-aim come from on your naturalized story?

- Plantinga: Perhaps truth is generally advantageous.

- Phil: Sometimes, but not always, and that is the point. To get your warrant condition, you need more than “often correlated with survival.” You need “aimed at truth in the relevant range.” That is a normative property, not guaranteed by evolutionary function. So either you add a bridging assumption that evolution delivers truth-aim, or you do not.

- Plantinga: Suppose I add the bridging assumption.

- Phil: Then your epistemology now depends on a controversial metaphysical thesis: that the natural story grounds a normatively truth-aimed design plan. That is extra theoretical baggage, and it is not epistemology-neutral.

- Plantinga: Alternatively, I could say the design plan is not fully naturalizable.

- Phil: Then you are carrying even heavier baggage: the truth-aimed design plan exists as a robust teleological fact not reducible to natural function. Either way, your analysis of knowledge is hostage to metaphysics that many epistemologists are not going to grant.

- Plantinga: But I need design plan language to distinguish proper function from lucky reliability.

- Phil: Here is the other horn. If you weaken the design-plan condition so that it just means “the system tends to produce true beliefs in normal conditions,” then your theory collapses into a dressed-up reliabilism. The distinctively teleological terms stop doing real work.

- Plantinga: So you are saying I must choose between metaphysical load and collapse.

- Phil: Exactly. In the smoke-detector case, “designed for smoke” has a clear grounding: intentional design. For humans, if you remove intentional design, you either fail to secure truth-aim or you import it as a contentious extra. And if you don’t remove it, then the account is no longer a general epistemology but a theory with a built-in metaphysical commitment. That dilemma is the flaw.

Argument for critique 4: the design-plan requirement yields a dilemma between metaphysical load and collapse into reliabilism

Key symbols and intended readings

(1) Annotation: The subject’s belief in a given proposition has Plantinga-style warrant.

(2) Annotation: The subject’s belief in a given proposition has reliabilist-style warrant, meaning the belief is produced by a reliable process in the relevant setting.

(3) Annotation: The design plan governing normal human cognitive faculties in the relevant range.

(4) Annotation: The design plan is aimed at truth in the sense required by Plantinga’s warrant program.

(5) Annotation: The design plan is fully naturalizable, meaning its normativity is fixed entirely by naturalistic etiology or function facts.

(6) Annotation: A bridging principle connecting naturalistic function facts to truth-aim.

(7) Annotation: Metaphysical-load condition, meaning the account requires an extra non-neutral commitment about truth-aim or teleology beyond a purely naturalistic story.

(8) Annotation: Collapse condition, meaning Plantinga warrant becomes extensionally equivalent to reliabilist warrant: a belief has the one exactly when it has the other.

Core premises

(9) Annotation: Non-vacuity: there is at least one warranted belief on Plantinga’s account.

(10) Annotation: Plantinga-style truth-aim requirement: any instance of Plantinga warrant presupposes that the governing design plan is truth-aimed.

(11) Annotation: Exhaustive options: either the design plan is fully naturalizable or it is not.

(12) Annotation: If something is both naturalizable and truth-aimed, then a bridging claim is required to connect the naturalistic base to the truth-aim property.

(13) Annotation: If the bridging principle is needed, the view carries metaphysical load because truth-aim is not delivered by the naturalistic base alone.

(14) Annotation: If the design plan is not naturalizable, the view carries metaphysical load because it relies on non-natural teleology or a non-natural normative fact about the design plan.

(15) Annotation: If the truth-aim requirement is dropped, the distinctive design-plan component no longer constrains warrant beyond reliability, so the view collapses into a reliabilist equivalent.

Derivation

(16) Annotation: From (9) and (10): since some instance of Plantinga warrant holds, the truth-aim condition must hold.

Lemma: if the design plan is truth-aimed, then the account carries metaphysical load.

(17) Annotation: Begin a conditional proof for the lemma.

(18) Annotation: Case 1 for (11): the design plan is naturalizable.

(19) Annotation: From (12) instantiated at the design plan, using the assumptions that it is naturalizable and that it is truth-aimed, the bridging principle is required.

(20) Annotation: From (13) and (19), metaphysical load follows in the naturalization case.

(21) Annotation: Case 2 for (11): the design plan is not naturalizable.

(22) Annotation: From (14) and (21), metaphysical load follows in the non-naturalization case.

(23) Annotation: From (11) together with the two cases (18)–(20) and (21)–(22), conclude the lemma.

Main conclusion as a dilemma

(24) Annotation: Contrapositive of (23): if there is no metaphysical load, then the truth-aim condition cannot be maintained.

(25) Annotation: From (24) and (15): if there is no metaphysical load, then dropping the truth-aim condition forces collapse.

(26) Annotation: From (25) by classical equivalence: either the warrant program carries metaphysical load, or else it collapses into a reliabilist equivalent.
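The dilemma is a short piece of classical propositional reasoning, and a proof assistant can confirm that the conclusion follows from the stated premises. The Lean 4 sketch below assumes a simplified propositional reading in which the design plan is fixed; the letters `N` (naturalizable), `T` (truth-aimed), `B` (bridging principle required), `M` (metaphysical load), and `C` (collapse) are labels chosen for this illustration, and the hypotheses correspond to the premises cited in the derivation as (12) through (15).

```lean
-- Illustrative propositional labels (assumptions of this sketch):
--   N : the design plan is fully naturalizable
--   T : the design plan is truth-aimed
--   B : a bridging principle is required
--   M : the account carries metaphysical load
--   C : Plantinga warrant collapses into reliabilist warrant
theorem load_or_collapse
    (N T B M C : Prop)
    (hBridge     : N ∧ T → B)  -- cited as (12)
    (hLoadBridge : B → M)      -- cited as (13)
    (hLoadNonNat : ¬ N → M)    -- cited as (14)
    (hCollapse   : ¬ T → C)    -- cited as (15)
    : M ∨ C :=
  (Classical.em T).elim
    -- Truth-aim is kept: split on naturalizability, as in the lemma.
    (fun hT => (Classical.em N).elim
      (fun hN  => Or.inl (hLoadBridge (hBridge ⟨hN, hT⟩)))
      (fun hnN => Or.inl (hLoadNonNat hnN)))
    -- Truth-aim is dropped: collapse follows.
    (fun hnT => Or.inr (hCollapse hnT))
```

On this reading, the disjunction itself does not need the non-vacuity and truth-aim premises; those do the further work of establishing that truth-aim actually holds, which places Plantinga's account on the metaphysical-load horn. The sketch only certifies validity, so the philosophical weight rests on whether premises (12) through (15) are true.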

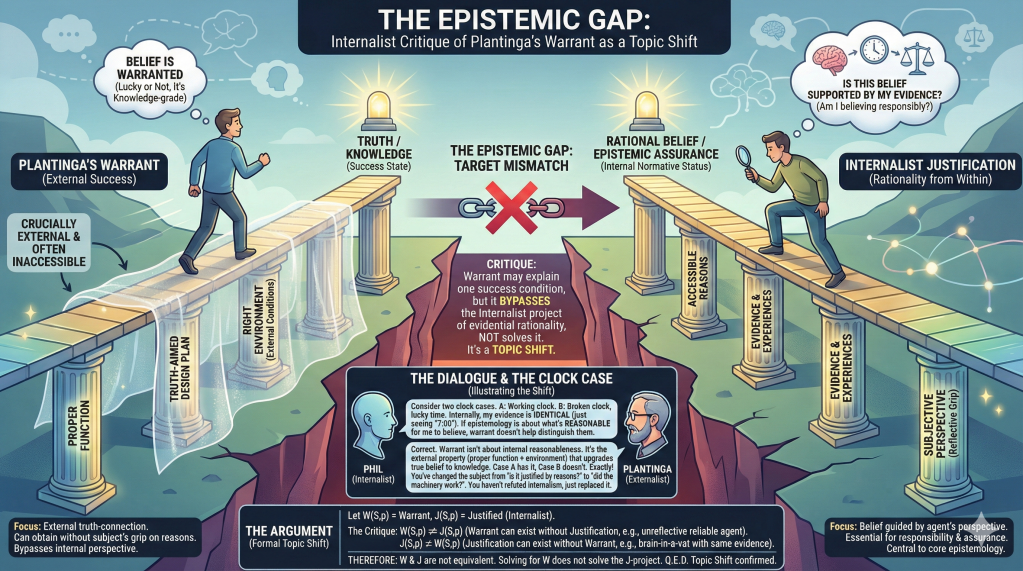

5) The internalist/evidentialist complaint: Plantinga brackets “reasons” rather than accounting for them

Plantinga’s warrant program is designed to explain the extra property that upgrades true belief to knowledge, and it does so externally: warrant depends on proper function, the right environment, and a truth-aimed design plan—facts that can obtain even when the subject lacks any reflective grip on them. Internalists and evidentialists argue that this leaves out what many take to be the central normative dimension of core epistemology: whether the belief is supported by the subject’s evidence/reasons (or is reasonable from the subject’s cognitive position). The pressure is not “external factors never matter,” but that Plantinga’s account can declare a belief warranted even when the subject lacks the kind of epistemic assurance that distinguishes rationally grounded belief from mere fortunate reliability; as Fumerton stresses, externalist warrant can leave a subject unable to tell (given their reasons) whether they are in a deception scenario, thereby failing to deliver the sort of rational security many think epistemology should explain.

Plantinga’s warrant program is explicitly externalist in structure: the property that upgrades true belief to knowledge depends crucially on factors that need not be accessible from the subject’s perspective—e.g., whether one’s faculties are functioning properly, whether the environment is the right sort, and whether the relevant design plan is truth-aimed. (Internet Encyclopedia of Philosophy)

The internalist/evidentialist objection is best formulated as a target-mismatch complaint rather than a direct refutation. Internalists about epistemic justification hold (in one influential family of views) that justificatory status supervenes on what is “internal” to the subject—roughly, their accessible reasons, evidence, experiences, or perspective. (Stanford Encyclopedia of Philosophy) Externalists deny this and insist that what matters for the epistemic status most closely tied to knowledge is the kind of truth-connection that internal duplicates (e.g., brain-in-a-vat doppelgängers) may lack. (Stanford Encyclopedia of Philosophy)

Now the core complaint against Plantinga, as a core epistemology theory, is not “external factors never matter.” It’s this:

- Plantinga’s account can deliver “warrant” without delivering what many call epistemic justification (in the internalist/evidentialist sense).

Plantinga is clear that one can be “within one’s epistemic rights” (a deontological or responsibility-like notion) without having warrant, and conversely (by the lights of his critics) one can have his warrant without having the kind of reflectively available support that internalists identify with justification. (Internet Encyclopedia of Philosophy)

- But justification, on the internalist picture, is not an optional side-issue; it is the central normative notion.

On this view, epistemology is not only about identifying a truth-linked property out in the world; it is also about what it is rational (or evidentially supported) for a subject to believe given their cognitive position. That is why internalists characterize justification in terms of internal reasons and why the internalism/externalism dispute persists as a dispute about what epistemic evaluation fundamentally is. (Stanford Encyclopedia of Philosophy)

- So: even if Plantinga is right about a truth-conducive property relevant to knowledge, he may have changed the subject relative to a central epistemological aim.

If you care about a notion that guides belief from the agent’s perspective—what one should believe given one’s evidence—then an external property that can vary between internal duplicates looks like the wrong kind of object. SEP’s standard presentation of the debate makes this contrast explicit: externalists want the likelihood-of-truth needed for knowledge; internalists insist that internal perspective conditions are epistemically fundamental. (Stanford Encyclopedia of Philosophy)

A sharper way to press the point is via philosophical assurance. Fumerton argues that externalist accounts can leave a subject lacking the kind of assurance that epistemology ought to provide: you might have warrant (because your faculties are, in fact, working properly in a good environment) while being unable—given your evidence—to tell whether you’re in a deception scenario, which means the theory fails to connect knowledge with the reflective security we ordinarily take to be epistemically significant. (myweb.uiowa.edu) This is not mere “internalist preference.” It is a substantive claim about what an adequate theory of epistemic status should do: it should not merely sort beliefs into a success category from the outside; it should also explain the status of belief as answerable to reasons from within the subject’s point of view. (Stanford Encyclopedia of Philosophy)

Plantinga can reply (and does, in effect): that’s not what warrant is for—warrant is the knowledge-making ingredient, not the internally accessible notion of being reasonable. But the objection survives that reply in its strongest form: it concludes not “Plantinga is incoherent,” but “Plantinga has not provided what many epistemologists were after when theorizing justification/knowledge.” In other words, the warrant program may be a theory of one important success condition, yet still be incomplete as a core epistemology if core epistemology is supposed to account for evidential rationality, responsibility to reasons, and the kind of assurance internalists treat as non-negotiable. (Stanford Encyclopedia of Philosophy)

- Phil: Let’s use a normal human scenario: you wake up groggy in a hotel room you’ve never been in. Everything feels familiar, but you’re disoriented. You see a digital clock that says 7:00.

- Plantinga: You form the belief “it is 7:00.”

- Phil: Right. Now imagine two cases. In Case A, the clock is functioning and accurate. In Case B, the clock is broken but happens to display the correct time at that moment.

- Plantinga: In Case A, the belief is produced by a truth-conducive source; in Case B, it is lucky.

- Phil: Good. Now add a twist. In both cases, you have the same internal perspective: you have no background on the clock, no independent check, and no reasons to think it is reliable. You simply see “7:00” and accept it.

- Plantinga: If your faculties are functioning properly and the environment is right, then in Case A you can have warrant.

- Phil: Exactly. But here is the internalist point: from your point of view, you have no better reasons in Case A than in Case B. The evidence you have is identical. So if we are doing core epistemology about what it is reasonable to believe given your evidence, your story has not answered that. It has changed the subject.

- Plantinga: I am analyzing warrant, the property that makes true belief knowledge, not internal reasonableness.

- Phil: That is the admission. You are not explaining what many epistemologists mean by justification: being supported by accessible reasons. You are offering an external success condition.

- Plantinga: But internalism cannot distinguish Case A from Case B.

- Phil: Correct, and that is precisely why internalists say their notion is not a truth-guarantee but a perspective-sensitive standard of rational belief. You can criticize that standard, but you cannot claim your account has solved it. You have simply replaced “is this belief supported by my reasons?” with “did my cognitive machinery in fact connect me to the truth?”

- Plantinga: Why think the first question is essential?

- Phil: Because ordinary epistemic evaluation often turns on whether someone believed responsibly given what they had to go on. If you and I are jurors and you accept a claim because it feels right while having no accessible support, we can evaluate that as irrational even if it turns out true. Your theory can label it warranted if the external conditions line up, but that does not address the internalist target.

- Plantinga: So your complaint is that my view does not capture rationality-from-within.

- Phil: Exactly. Your framework can say, “you had warrant because the world cooperated and your faculties functioned properly,” while leaving untouched the question, “did you have good reasons?” That is the gap. If the goal is the internalist justification project, your account does not refute it; it bypasses it.

Argument for critique 5: Plantinga-style warrant does not capture internalist justification, so it cannot settle the internalist reasonableness project

Key predicates and intended readings

(1) Annotation: The subject’s belief in a given proposition has Plantinga-style warrant, determined by proper function, truth-aimed design plan, appropriate environment, and no undefeated defeaters.

(2) Annotation: The subject is justified in believing the proposition in the internalist or evidentialist sense, meaning the proposition is supported by the subject’s accessible reasons or evidence.

(3) Annotation: The justification project, defined as the task of giving conditions that correctly classify when internalist justification holds.

(4) Annotation: The claim that Plantinga’s program solves the justification project by using Plantinga-style warrant as the relevant replacement property for internalist justification.

Adequacy bridge for what it would mean to solve the justification project by appeal to Plantinga’s warrant

(5) Annotation: If Plantinga’s program truly solves the justification project by appeal to Plantinga-style warrant, then warrant must match internalist justification extensionally across cases, since otherwise the project has not been solved but replaced by a different target.

Core independence premises used in the topic-shift critique

(6) Annotation: There are cases where internalist justification is preserved while Plantinga’s warrant fails, for example, internal duplicates in globally deceptive environments where the subject’s accessible evidence is the same as in normal life but the external conditions required by Plantinga-style warrant are not met.

(7) Annotation: There are cases where Plantinga’s warrant can hold without internalist justification, for example, agents whose faculties function properly and yield truth-conducive beliefs but who lack the internal access or evidential perspective demanded by strict internalist criteria for justification.

Derivation

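Annotation: From (6): since some internalist justification occurs without Plantinga-style warrant, internalist justification does not generally entail Plantinga-style warrant.

Annotation: From (7): since some Plantinga-style warrant occurs without internalist justification, Plantinga-style warrant does not generally entail internalist justification.

Annotation: From (8) and (9): if neither direction of entailment holds universally, then Plantinga-style warrant and internalist justification are not extensionally equivalent.

Annotation: From (5) and (10) by modus tollens: if solving the justification project via Plantinga-style warrant requires extensional equivalence, and equivalence fails, then Plantinga’s program does not solve the internalist justification project.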

Conclusion stated in the intended philosophical form

Annotation: Therefore, even if Plantinga-style warrant is valuable for analyzing knowledge-conducive success, it does not answer the internalist or evidentialist question of what makes a belief justified from the subject’s accessible evidential position, so appealing to warrant to settle that dispute constitutes a topic shift rather than a resolution.
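The logical skeleton of this argument can be sketched symbolically. The labels are assumptions introduced here for illustration and may not match the notation used elsewhere in this text: $W(S,p)$ for Plantinga-style warrant, $J(S,p)$ for internalist justification, and $\mathrm{Solves}$ for the claim that Plantinga’s program solves the justification project. The step numbers follow the derivation above.

```latex
% Assumed labels: W = Plantinga-style warrant, J = internalist justification,
% Solves = "Plantinga's program solves the justification project".
\begin{align*}
&(5)\quad \mathrm{Solves} \rightarrow \forall S\,\forall p\,\bigl(W(S,p) \leftrightarrow J(S,p)\bigr)
  && \text{adequacy bridge} \\
&(6)\quad \exists S\,\exists p\,\bigl(J(S,p) \wedge \neg W(S,p)\bigr)
  && \text{justification without warrant} \\
&(7)\quad \exists S\,\exists p\,\bigl(W(S,p) \wedge \neg J(S,p)\bigr)
  && \text{warrant without justification} \\
&(8)\quad \neg\,\forall S\,\forall p\,\bigl(J(S,p) \rightarrow W(S,p)\bigr)
  && \text{from (6)} \\
&(9)\quad \neg\,\forall S\,\forall p\,\bigl(W(S,p) \rightarrow J(S,p)\bigr)
  && \text{from (7)} \\
&(10)\quad \neg\,\forall S\,\forall p\,\bigl(W(S,p) \leftrightarrow J(S,p)\bigr)
  && \text{from (8) and (9)} \\
&(11)\quad \neg\,\mathrm{Solves}
  && \text{from (5) and (10), modus tollens}
\end{align*}
```

On this rendering, either (8) or (9) alone already yields (10). The argument uses both to show that the mismatch between warrant and justification runs in both directions.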

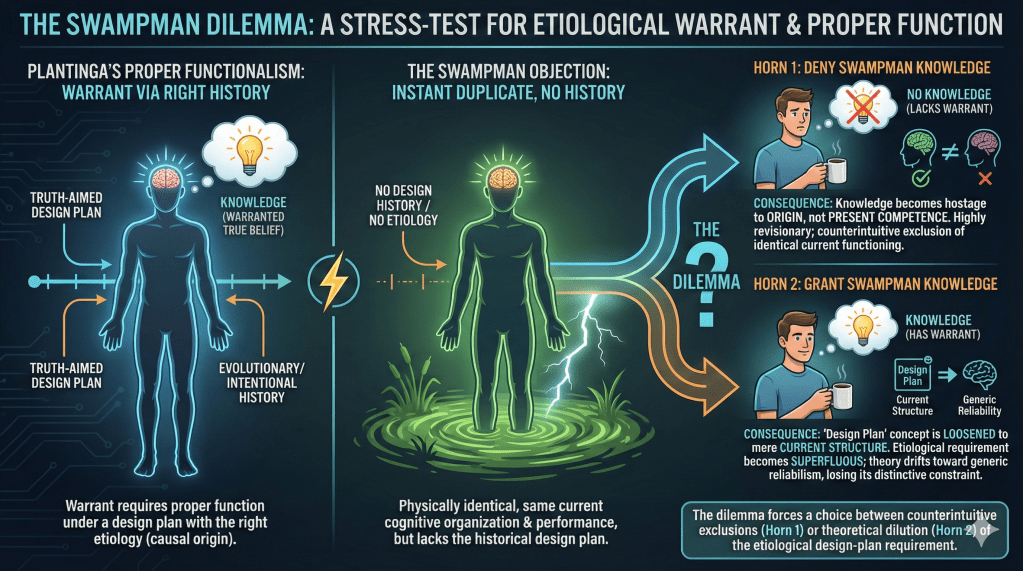

6) Etiology and Swampman: does warrant require the right causal history?

Plantinga-style proper functionalism ties warrant to a subject’s truth-aimed design plan and proper function, which naturally suggests an etiological dependence: the relevant “function” is the one conferred by the right kind of design history. The Swampman objection exploits this: imagine a molecule-for-molecule duplicate with the same present cognitive organization and performance, but no design history at all—a paradigmatic “designless” agent who still seems able to form ordinary perceptual and inferential beliefs in knowledge-like ways. This forces a dilemma. If you deny Swampman knowledge, you commit to the highly revisionary claim that present cognitive excellence is insufficient—knowledge can hinge on origin facts even when current epistemic performance is identical. If you grant Swampman knowledge, you either concede that proper function (in Plantinga’s design-plan sense) is not necessary, or you loosen “design plan” until it attaches to mere current organization—at which point the design-plan requirement threatens to lose its distinctive constraint and drift toward a generic reliability/competence story.

Plantinga’s proper-function theory builds etiology into warrant. In outline: a belief has warrant only if it is produced by cognitive faculties that are functioning properly, in an appropriate environment, according to a truth-aimed design plan. (Internet Encyclopedia of Philosophy)

The Swampman objection targets the necessity of that design-plan/etiological component. Davidson’s Swampman case (and its many epistemic variants) describes a molecule-for-molecule duplicate of a normal human that appears “all at once,” with no evolutionary or intentional design history, yet proceeds to perceive, reason, and form beliefs in the same outwardly competent way as the original. The pressure is immediate: if internal duplicates can be equally competent now, why should one have knowledge and the other not, merely because of how they came into existence? That is the nerve this objection touches.

Plantinga himself recognizes the threat: if Swampman has warranted beliefs (and thus knowledge), then proper functionalism looks false because Swampman lacks a design plan of the relevant sort. (andrewmbailey.com) This is why the Swampman literature is often framed as a dilemma for proper functionalism. (PhilPapers)

The dilemma

Horn 1: Deny that Swampman has warrant (and thus deny that Swampman has knowledge).

Given Plantinga’s necessity claim, this is the straightforward verdict: no design plan → no proper function (in the normatively relevant sense) → no warrant. (andrewmbailey.com)

But critics argue this horn exacts a steep price:

- Knowledge becomes hostage to origin rather than present cognitive competence. Two agents could be indistinguishable in current cognitive operation—same perceptual discrimination, same inferential competence, same memory behavior—yet differ radically in epistemic status because one has the “right” history and the other doesn’t. That strikes many as the wrong dependency: epistemic evaluation should track whether the belief is formed well now, not whether the believer has the right pedigree.

- The verdict looks extensionally implausible in mundane cases. Swampman (or “instant adult duplicate”) seems able to know trivial propositions upon opening his eyes—e.g., that there is a tree before him, that he has hands, that 2+2=4—if those beliefs are formed in the same way normal humans form them. Denying all of this looks like a radical revision of ordinary epistemic classification, not a small theoretical sacrifice.

Plantinga’s reported response to this kind of pressure includes the move that perhaps Swampman is not metaphysically possible (so the intuition pump is defanged), or at least that the case doesn’t trouble the theory in the intended way. (JSTOR) But that response shifts the debate from epistemology to modal/metaphysical commitments: the theory’s safety from counterexample now depends on whether the scenario is genuinely possible, not on whether the epistemic principles are well-motivated.

Horn 2: Grant that Swampman has warrant by loosening what counts as a “design plan.”

Proper functionalists who feel the pull of the Swampman intuition often respond by broadening “design plan” so that it can be instantiated by a system’s present organizational/functional structure, even without the “right” etiology. The aim is to say: Swampman does have a design plan, because the relevant normativity supervenes on current functional organization rather than historical selection or intentional design.

Critics argue this horn also has a serious cost:

- The design-plan condition risks becoming explanatorily superfluous. If anything with the right internal organization automatically counts as having the relevant design plan, then “design plan” stops doing distinctive work. You are close to saying: if the process is reliable/competent in the environment, it yields warrant—i.e., you slide toward a reliabilist (or broadly success-based) story, and the design-plan layer is no longer a principled constraint. This is exactly the kind of “superfluity” worry that the Swampman dilemma literature emphasizes. (PhilPapers)

- You blunt Plantinga’s key argument for why proper function is necessary. Plantinga’s motivation for proper function is partly that it distinguishes genuine warrant from cases where a belief-forming method happens to spit out truths by accident; “design plan” is supposed to anchor the normative difference between functioning well and mere lucky success. (andrewmbailey.com) If you weaken design-plan talk until Swampman qualifies simply by having the right current structure, critics will ask why you still need the design-plan apparatus at all, rather than a direct anti-luck or virtue-theoretic condition.

What the objection establishes (and what it doesn’t)

The Swampman argument is not a knockdown refutation all by itself; it is a focused stress-test on Plantinga’s claim that design-plan proper function is necessary for warrant.

✓ If you judge (as many do) that a Swampman-like duplicate could have knowledge immediately, then Plantinga’s necessity claim looks too strong: it makes epistemic status depend on historical etiology in a way that fails to track present epistemic performance. (PhilPapers)

✓ If you deny that Swampman could know, you can preserve Plantinga’s necessity claim, but you are committed to an epistemology on which vast differences in epistemic status can hinge on origin facts even when present cognitive functioning is identical—a view many see as a theoretical overreach. (PhilPapers)

✓ If you loosen “design plan” to save Swampman’s knowledge, you risk hollowing out the distinctive explanatory role that design plans were introduced to play. (PhilPapers)

That’s why this objection is so persistent in the core epistemology literature: it forces proper functionalism to choose between counterintuitive exclusions and theoretical dilution, and neither option is cost-free.
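The structure of the dilemma can be sketched as a short propositional schema. The letters are assumptions introduced here for illustration: $K$ for "Swampman knows mundane propositions," $N$ for "design-plan proper function in the etiological sense is necessary for warrant," $R$ for "epistemic status hinges on origin facts even when present functioning is identical," and $D$ for "the design-plan condition is loosened until it no longer does distinctive explanatory work."

```latex
% Assumed letters: K, N, R, D as glossed in the lead-in paragraph.
\begin{align*}
&\text{Horn 1:} && \neg K \rightarrow R \\
&\text{Horn 2:} && K \rightarrow (\neg N \vee D) \\
&\text{Excluded middle:} && K \vee \neg K \\
&\text{Therefore:} && R \vee \neg N \vee D
\end{align*}
```

The schema does not say which disjunct a proper functionalist should accept. It only records that, given the two horns, at least one of the three costs must be paid.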

- Phil: Let’s use an everyday sci-fi scenario that still feels intuitive. Imagine a lightning strike in a lab accidentally assembles a perfect molecule-for-molecule duplicate of you. Call him Swamp-Phil.

- Plantinga: A Swampman case, yes.

- Phil: Swamp-Phil opens his eyes, looks at a coffee mug on the table, and forms the belief “there is a mug in front of me.” Same visual system, same processing, same immediate experience as you would have.

- Plantinga: He is an internal duplicate, yes.

- Phil: Now you say warrant requires proper function relative to a design plan, and that design plan is tied to the right kind of history. Swamp-Phil has no such history.

- Plantinga: Correct. There is no design plan governing him in the relevant sense.

- Phil: Then on your view, Swamp-Phil cannot have warrant for “there is a mug,” and therefore cannot know it, even though his cognition is functioning exactly as yours is functioning in that moment.

- Plantinga: That seems to follow.

- Phil: But that is the flaw. We normally treat knowledge as tracking present cognitive contact with reality, not the origin story of the believer. If Swamp-Phil sees the mug clearly, why does he lack knowledge while you have it?

- Plantinga: Because proper function is a normative notion grounded in a design plan, and he lacks that grounding.

- Phil: Then your account forces a deeply revisionary result: two agents identical in current cognition differ in knowledge solely because one has the right backstory and the other doesn’t. That makes knowledge hinge on etiology rather than on epistemic performance.

- Plantinga: Perhaps Swamp-Phil is not really possible.

- Phil: If you block the case by denying possibility, you are no longer defending the epistemology directly. You are defending it by a modal escape hatch. The point of the case is that if such a being were to exist, we would still say he knows mundane facts immediately upon perceiving them.

- Plantinga: Alternatively, I could loosen the notion of design plan so that it applies to him.

- Phil: And then the design-plan requirement stops doing its distinctive work. If any system with the right internal organization automatically counts as having a truth-aimed design plan, you are drifting toward a generic reliability or competence view.

- Plantinga: So either I deny Swamp-Phil knowledge or I dilute design plan.

- Phil: Exactly. That is the dilemma. Either accept an implausible verdict about Swamp-Phil, or weaken the design-plan condition until it loses explanatory bite. The Swampman case forces that choice, and that is why the etiology requirement is a liability.

Argument for critique 6: Swampman creates a dilemma for any warrant theory that makes the right etiology necessary

Key predicates and intended readings

Annotation: A subject’s belief in a proposition has Plantinga-style warrant.

Annotation: The subject knows the proposition.

Annotation: The proposition is true.

Annotation: The subject has the right etiological history for proper function in Plantinga’s design-plan sense, meaning there is a governing design plan with the right kind of origin, and the subject falls under it.

Annotation: The subject is Swampman, meaning the subject is a molecule-for-molecule duplicate of a normal human but lacks any relevant design history.

Annotation: Two subjects are internal duplicates at the time of belief formation in the relevant respects for basic cognition, meaning same functional organization and local operation.

Annotation: The proposition is a basic proposition of the sort ordinary perception can deliver, such as a simple object-present claim.

Core premises

Annotation: Knowledge entails truth and Plantinga-style warrant, since warrant is the ingredient that upgrades true belief to knowledge.

Annotation: Etiology necessity: Plantinga-style warrant requires that the subject be under the right design plan history, as captured by the etiological-history predicate defined above.

Annotation: Swampman exists and, by stipulation of the case, lacks the relevant etiological history.

Annotation: Any Swampman is an internal duplicate of some ordinary human who does have the relevant design history.

Annotation: There exists an ordinary human with the right history who knows some basic proposition.

Annotation: Present-competence parity: if one subject is an internal duplicate of another, then for basic propositions, whatever counts as knowledge for the original should also count as knowledge for the duplicate, because their current cognition relevant to those propositions is the same.
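The core premises can be sketched symbolically. The predicate letters are assumptions introduced here for illustration and may not match the notation used elsewhere in this text: $K(x,p)$ for knowledge, $T(p)$ for truth, $W(x,p)$ for Plantinga-style warrant, $H(x)$ for the right etiological history, $\mathrm{Sw}(x)$ for being Swampman, $\mathrm{Dup}(x,y)$ for "$x$ is an internal duplicate of $y$," and $B(p)$ for "$p$ is basic." The numbering (8) to (13) is also an assumption: it takes the seven predicate definitions to occupy (1) to (7), which agrees with how the derivation cites these premises.

```latex
% Assumed predicate letters: K, T, W, H, Sw, Dup, B as glossed in the lead-in.
\begin{align*}
&(8)\quad \forall x\,\forall p\,\bigl(K(x,p) \rightarrow T(p) \wedge W(x,p)\bigr)
  && \text{knowledge entails truth and warrant} \\
&(9)\quad \forall x\,\forall p\,\bigl(W(x,p) \rightarrow H(x)\bigr)
  && \text{etiology necessity} \\
&(10)\quad \exists x\,\mathrm{Sw}(x) \;\wedge\; \forall x\,\bigl(\mathrm{Sw}(x) \rightarrow \neg H(x)\bigr)
  && \text{Swampman exists, lacks history} \\
&(11)\quad \forall x\,\bigl(\mathrm{Sw}(x) \rightarrow \exists y\,(\mathrm{Dup}(x,y) \wedge H(y))\bigr)
  && \text{Swampman duplicates an ordinary human} \\
&(12)\quad \exists y\,\exists p\,\bigl(H(y) \wedge B(p) \wedge K(y,p)\bigr)
  && \text{an ordinary human knows something basic} \\
&(13)\quad \forall x\,\forall y\,\forall p\,\bigl(\mathrm{Dup}(x,y) \wedge B(p) \wedge K(y,p) \rightarrow K(x,p)\bigr)
  && \text{present-competence parity}
\end{align*}
```

Read this way, (8) and (9) together give $K(x,p) \rightarrow H(x)$, which is the link the Swampman case puts under pressure once parity (13) hands knowledge to a subject that (10) says lacks the right history.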

Derivation to contradiction

Annotation: Fix a particular Swampman with no relevant history.

Annotation: From (11) and the choice of Swampman just fixed, there is an ordinary human duplicate of that Swampman who has the right history.

Annotation: Fix one such ordinary duplicate.

Annotation: Fix an ordinary human and a basic proposition such that the ordinary human knows the proposition.

Annotation: We align the ordinary knower with the duplicate of Swampman for the case at hand, which is exactly what Swampman thought experiments intend.

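Annotation: From (13), (16), and (18): since the Swampman duplicates the ordinary human and the proposition is basic, the ordinary human’s knowledge of the proposition transfers to the Swampman.

Annotation: Instance of (8) for the Swampman and the basic proposition.

Annotation: From (19) and (20): Swampman has Plantinga-style warrant for the basic proposition.

Annotation: Instance of (9) for the Swampman and the basic proposition.

Annotation: From (21) and (22): Swampman must have the right etiological history.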